From AI Intern to AI Employee

Previously in the Series

In the previous articles, we explored how AI Agents can transform business operations from a bakery CEO’s perspective, showcased the data workflow, and built the whole thing with CloudFormation and SAM.

Our CEO’s AI Intern graduated, showed up on day one, received an Intern’s Laptop (Action Groups), followed the Intern’s Guide (Instructions), and started answering questions like “How did we do with the hospitality business in the first half of the year?” It worked.

But here’s the thing about Interns — they eventually need a promotion.

The Intern’s Problem

Remember how we built the Intern? The Intern’s Laptop (tooling) was an “OpenAPI schema” (strictly speaking, a Bedrock function schema rather than an OpenAPI document; I’ll call it OpenAPI for simplicity) wired to a separate Lambda function. The Intern’s Guide (instructions) lived in a CloudFormation YAML template. The University the Intern graduated from (the Foundation Model) was hardcoded as a Bedrock ARN. Testing meant deploying to AWS and hoping for the best.

Want to change the University? Redeploy the stack. Want to give the Intern a new tool? Write a new Lambda, define a new Action Group, update the OpenAPI schema, and create a new agent version. Want to test locally before onboarding? Not an option — this Intern only exists in the AWS console.

Our Intern was effective but inflexible. Tied to one office, one desk, one rigid set of procedures.

It’s time for a promotion. The Intern is becoming an Employee: portable, testable, and ready for the real world. We’re migrating the CEO Financial Advisor from native Bedrock Agents to the Strands Agents SDK running on Amazon Bedrock AgentCore Runtime (AgentCore for short).

And along the way, we’ll answer the question every serverless enthusiast is asking: Is Lambda dead for AI Agents? Is the Docker container (ECR) suddenly hot again?

Note 1. Even if you are not comfortable reading code, I recommend comparing the original bedrock-agent-stack.yaml with the new Strands-based implementation. Both live in the same repository, so you can see the evolution side by side.

Note 2. The full repository: https://github.com/javatask/ai-agent-ceo-fin-advisor. The original native Bedrock Agent is in the root, and the Strands migration is in the strands/ folder.

Why Promote the Intern? Five Reasons to Migrate

1. The Employee Can Switch Universities

Native Bedrock Agents are bound to models within the Bedrock ecosystem. If the CEO wants a different “University” — a different model provider — the whole agent needs restructuring.

Strands is model-agnostic. The Employee can use Claude Sonnet 4 via Bedrock in production, prototype with a local Llama model on a developer’s laptop via Ollama, and evaluate OpenAI through LiteLLM next quarter — all with the same code. No vendor lock-in. The Employee picks the best University for each task.

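```python
from strands import Agent
from strands.models.litellm import LiteLLMModel
from strands.models.ollama import OllamaModel

# Tools and instructions are shared by every model choice
tools = [analyze_financial_performance]  # the @tool function from the next section
instructions = "You are the CEO's financial advisor..."  # placeholder text

# Production: Bedrock (Claude Sonnet 4 via a US cross-region inference profile)
agent = Agent(model="us.anthropic.claude-sonnet-4-20250514-v1:0", tools=tools, system_prompt=instructions)

# Local development: Ollama (model_id is whichever model you have pulled locally)
agent = Agent(model=OllamaModel(host="http://localhost:11434", model_id="llama3"), tools=tools, system_prompt=instructions)

# Evaluation: OpenAI via LiteLLM
agent = Agent(model=LiteLLMModel(model_id="gpt-4o"), tools=tools, system_prompt=instructions)
```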

Same Employee, different Universities, zero code changes to tools or instructions.
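One way to get that “zero code changes” property in practice is to pick the University from configuration instead of editing code. The sketch below is a stdlib-only illustration, not something taken from the repository: the MODEL_PROVIDER and MODEL_ID environment variables and the "llama3" default are hypothetical, and the function only returns a description of which model to build. In a real agent you would map each provider to the matching Agent(model=...) call from the block above.

```python
import os
from typing import Mapping, NamedTuple


class ModelChoice(NamedTuple):
    """Which provider to use and the keyword arguments for its model class."""
    provider: str
    kwargs: dict


# Hypothetical defaults; the Bedrock ID is the one used in this article.
DEFAULTS = {
    "bedrock": {"model_id": "us.anthropic.claude-sonnet-4-20250514-v1:0"},
    "ollama": {"host": "http://localhost:11434", "model_id": "llama3"},
    "litellm": {"model_id": "gpt-4o"},
}


def select_model(env: Mapping[str, str] = os.environ) -> ModelChoice:
    """Pick a model provider from (hypothetical) environment variables."""
    provider = env.get("MODEL_PROVIDER", "bedrock").lower()
    if provider not in DEFAULTS:
        raise ValueError(f"unknown MODEL_PROVIDER: {provider!r}")
    kwargs = dict(DEFAULTS[provider])  # copy so callers cannot mutate the defaults
    if "MODEL_ID" in env:
        kwargs["model_id"] = env["MODEL_ID"]
    return ModelChoice(provider, kwargs)
```

On a developer laptop, MODEL_PROVIDER=ollama selects the local model; in production the variable is simply left unset and the Bedrock default applies. The tools and instructions never see the difference.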

2. The Employee’s Laptop is Just Python

This is where the migration pays for itself in the first week.

Remember the Intern’s Laptop? It required an OpenAPI schema for every tool, a separate Lambda function as the executor, an Action Group configuration, and a CloudFormation resource wiring it all together. Adding a single tool meant touching four or five files across two repositories.

The Employee’s Laptop is a Python function with a decorator.

Before — The Intern’s Laptop (Native Bedrock Agents):

```yaml
# 1. Lambda function (separate file, separate SAM stack)
# 2. OpenAPI-style schema in CloudFormation:
ActionGroups:
  - ActionGroupName: financial-analysis-actions
    ActionGroupExecutor:
      Lambda: !Ref LambdaFunctionArn
    FunctionSchema:
      Functions:
        - Name: analyze_industry_performance
          Description: |
            Analyzes financial performance metrics across a network of bakeries...
          Parameters:
            date_from:
              Type: string
              Description: date from in format YYYY-MM-DD
              Required: true
            date_to:
              Type: string
              Description: date to in format YYYY-MM-DD
              Required: true
            industry:
              Type: string
              Description: industry for each need to do analysis
              Required: true
```

After — The Employee’s Laptop (Strands Agents):

```python
from strands import Agent, tool


@tool
def analyze_financial_performance(industry: str, date_from: str, date_to: str) -> str:
    """
    Analyze financial metrics for a specific industry and date range.

    Args:
        industry: Industry segment (schools, cafes, shops, factories, restaurants, hotels)
        date_from: Start date in YYYY-MM-DD format
        date_to: End date in YYYY-MM-DD format

    Returns:
        Financial analysis summary with bookings, billings, and billing rates
    """
    # generate_industry_data and format_analysis are helpers defined elsewhere in the repo
    data = generate_industry_data(industry, date_from, date_to)
    return format_analysis(data)
```

No OpenAPI schema. No separate Lambda. No Action Group. The @tool decorator turns any Python function into an agent tool: the type hints define the parameters, and the docstring becomes the tool description that the LLM reads. It is the same detailed description we gave the Intern, but now it lives right next to the code it describes.

Strands has been called the “Flask of AI Agents” — and after migrating, I understand why. The same simplicity that Flask brought to web development, Strands brings to agent development.
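To make “the function is the schema” concrete, here is a toy decorator that derives a tool specification from a function’s name, type hints, and docstring. It is not Strands’ actual implementation: the name toy_tool, the spec layout, and the simplified docstring parsing are assumptions for illustration. The real SDK builds a richer schema, but the principle is the same.

```python
import inspect
import typing

# Map Python annotations to JSON-schema type names (toy subset).
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def toy_tool(func):
    """Attach a tool spec, derived from the signature and docstring, to func."""
    hints = typing.get_type_hints(func)
    doc = inspect.getdoc(func) or ""  # getdoc also dedents the docstring
    summary, _, rest = doc.partition("\n")

    # Collect "name: description" lines from the Args: section.
    arg_docs, in_args = {}, False
    for line in rest.splitlines():
        stripped = line.strip()
        if stripped == "Args:":
            in_args = True
        elif stripped.endswith(":") and not line.startswith(" "):
            in_args = False  # another section such as "Returns:" begins
        elif in_args and ":" in stripped:
            name, _, desc = stripped.partition(":")
            arg_docs[name.strip()] = desc.strip()

    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        properties[name] = {
            "type": _JSON_TYPES.get(hints.get(name), "string"),
            "description": arg_docs.get(name, ""),
        }
        if param.default is inspect.Parameter.empty:
            required.append(name)  # no default value means the LLM must supply it

    func.tool_spec = {
        "name": func.__name__,
        "description": summary.strip(),
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }
    return func


@toy_tool
def analyze_financial_performance(industry: str, date_from: str, date_to: str) -> str:
    """Analyze financial metrics for a specific industry and date range.

    Args:
        industry: Industry segment (schools, cafes, shops, factories, restaurants, hotels)
        date_from: Start date in YYYY-MM-DD format
        date_to: End date in YYYY-MM-DD format
    """
    return f"{industry}: {date_from}..{date_to}"  # stand-in body for the sketch
```

The decorated function is still callable as normal Python, and its tool_spec attribute carries everything the model needs to decide when and how to call it. Rename a parameter and the spec follows automatically, which is exactly what the hand-written schema could not do.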

3. The Employee Can Lead a Team

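Native Bedrock Agents are optimised for single-Intern tasks. (Bedrock does offer multi-agent collaboration, but as a supervisor-and-collaborators setup configured inside the service.) Our CEO had one Intern analyzing finances. But what if the CEO needs an entire team?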

Strands provides built-in patterns for multi-agent architectures:

Swarm Patterns — Dynamic, autonomous handoffs between Employees. The financial analyst realises a compliance check is needed and hands it off to the compliance specialist automatically.

Agent Graphs — Structured flowcharts where Employees determine execution paths based on intermediate results.

Agent-as-Tool — Hierarchical delegation where a “Head of Finance” Employee calls specialised sub-Employees as if they were ordinary tools on a Laptop.

For the bakery CEO, this means a future where one request like “Prepare the quarterly board report” triggers a team: an Industry Analyst gathers data, a Compliance Checker validates numbers, and a Report Writer assembles everything into a polished deliverable.
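The Agent-as-Tool pattern is easiest to see with the LLM taken out of the picture. In this stdlib-only sketch, each “Employee” is a plain callable and the Head of Finance calls its specialists in a fixed order. In Strands, the specialists would themselves be agents exposed as @tool functions, and the head’s LLM would decide which to call. All names and the placeholder figures are hypothetical.

```python
from typing import Callable, Dict


# Hypothetical specialist "Employees". In a real system each would wrap its own
# agent; here they are deterministic stand-ins so the control flow is visible.
def industry_analyst(industry: str) -> Dict[str, float]:
    """Pretend to gather figures for one industry (made-up placeholder numbers)."""
    placeholder = {"cafes": 120_000.0, "hotels": 340_000.0}
    return {"industry_revenue": placeholder.get(industry, 0.0)}


def compliance_checker(figures: Dict[str, float]) -> bool:
    """Pretend to validate numbers: reject negative values."""
    return all(value >= 0 for value in figures.values())


def report_writer(industry: str, figures: Dict[str, float]) -> str:
    """Pretend to assemble the deliverable."""
    return f"Board report for {industry}: revenue {figures['industry_revenue']:,.0f}"


# The head of finance sees its team only as named tools.
TEAM: Dict[str, Callable] = {
    "analyst": industry_analyst,
    "compliance": compliance_checker,
    "writer": report_writer,
}


def head_of_finance(industry: str, team: Dict[str, Callable] = TEAM) -> str:
    """Delegate one CEO request to specialists, as an orchestrating agent would."""
    figures = team["analyst"](industry)
    if not team["compliance"](figures):
        return f"Report for {industry} blocked: figures failed compliance checks"
    return team["writer"](industry, figures)
```

Because the team is passed in as a dictionary of tools, swapping one specialist (for example, a stricter compliance checker) does not touch the orchestrator, which is the same decoupling Agent-as-Tool gives you at the agent level.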

4. The Employee Explains Their Thinking

Debugging the Intern was painful. The reasoning steps were hidden behind a service boundary. You could see the final output and the tool invocation logs, but the intermediate thinking — why the Intern chose one tool over another, how it interpreted the CEO’s ambiguous question about “hospitality” mapping to industry=hotels — was largely opaque.

The Employee, powered by Strands with AgentCore, emits native OpenTelemetry traces for every model invocation, tool call, and reasoning step, so each decision can be traced back to the steps that produced it. In practice, teams report up to 50% faster problem resolution simply because they can see what the Employee is thinking.

When the CEO asks, “Why did the report show different numbers than last time?” — you can trace every step the Employee took to produce that answer.
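What does “seeing what the Employee is thinking” look like? An OpenTelemetry trace records each step as a span with a name, a parent, attributes, and timing. The sketch below imitates that shape with the standard library only. It is not the OpenTelemetry API, and the span names (invoke_agent, model_call, tool_call) are illustrative rather than the exact names Strands emits.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, List, Optional


@dataclass
class Span:
    name: str
    parent: Optional[str]
    attributes: dict = field(default_factory=dict)
    duration_s: float = 0.0


class ToyTracer:
    """Collects nested spans, mimicking the shape of an OpenTelemetry trace."""

    def __init__(self) -> None:
        self.spans: List[Span] = []
        self._stack: List[str] = []

    @contextmanager
    def span(self, name: str, **attributes) -> Iterator[Span]:
        parent = self._stack[-1] if self._stack else None
        record = Span(name, parent, attributes)
        self._stack.append(name)
        start = time.perf_counter()
        try:
            yield record
        finally:
            record.duration_s = time.perf_counter() - start
            self._stack.pop()
            self.spans.append(record)  # recorded when the span ends, so children come first


tracer = ToyTracer()
with tracer.span("invoke_agent", prompt="How did we do with hospitality in H1?"):
    with tracer.span("model_call", purpose="reasoning"):
        pass  # here the LLM would decide that "hospitality" means industry=hotels
    with tracer.span("tool_call", tool="analyze_financial_performance", industry="hotels"):
        pass
    with tracer.span("model_call", purpose="formatting"):
        pass

for s in tracer.spans:
    print(f"{s.name:<12} parent={s.parent} {s.attributes}")
```

The attribute on the tool_call span is the answer to “why hotels?”: the interpretation of the CEO’s wording is recorded at the step where it happened, instead of being hidden behind a service boundary.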

5. The Employee Gets a Proper Office (AgentCore)

Migrating to Strands is often a stepping stone to AWS AgentCore, which cleanly separates the “Employee’s brain” (Strands) from the “Employee’s office” (AgentCore):

Persistent Memory — Short-term memory for the turns of the current conversation, plus long-term memory that extracts facts, preferences, and summaries across sessions. The CEO can say, “Compare this to what you told me last quarter,” and the Employee remembers. Far more robust than the Intern’s basic session persistence.

Policy Guardrails — A Cedar-based policy engine that enforces deterministic security boundaries outside the Employee’s reasoning loop. Think: “Block all financial projections that exceed 3-year horizons” or “Never share salary data outside the C-suite session.”

Scaling and Isolation — MicroVM-level session isolation and automatic scaling. One Employee’s failure never cascades to others. Each CEO session runs in complete isolation.
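The key property of a policy guardrail is that it is evaluated deterministically, outside the model, before a tool call runs. AgentCore expresses such rules in Cedar; the Python sketch below only illustrates the idea using the two example rules from this section. The request fields, the project_financials tool, and the set of C-suite roles are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class ToolRequest:
    tool: str
    horizon_years: int = 0
    data_category: str = "financials"
    session_role: str = "ceo"


# Each rule returns a denial reason, or None to allow. Any denial wins.
Rule = Callable[[ToolRequest], Optional[str]]


def no_long_projections(req: ToolRequest) -> Optional[str]:
    if req.tool == "project_financials" and req.horizon_years > 3:
        return "projections beyond a 3-year horizon are blocked"
    return None


def salary_data_c_suite_only(req: ToolRequest) -> Optional[str]:
    if req.data_category == "salary" and req.session_role not in {"ceo", "cfo", "coo"}:
        return "salary data is restricted to C-suite sessions"
    return None


RULES: List[Rule] = [no_long_projections, salary_data_c_suite_only]


def authorize(req: ToolRequest, rules: List[Rule] = RULES) -> Optional[str]:
    """Return the first denial reason, or None if every rule allows the call."""
    for rule in rules:
        reason = rule(req)
        if reason is not None:
            return reason
    return None
```

Because authorize never consults the LLM, a cleverly worded prompt cannot talk its way past it. That is the difference from writing the same rule into the Employee’s instructions and hoping it is followed.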

When the Intern Still Shines

Before we go further, I don’t want to give the impression that native Bedrock Agents should be abandoned. They shouldn’t. In fact, Bedrock Agents remain the fastest way to go from zero to a working AI Agent.

If you’re a CEO who just had the conversation with your daughter from the first article and want to see an Intern in action this afternoon, native Bedrock Agents are unbeatable:

No code required for the first experiment — The AWS Console lets you define an agent, attach a Knowledge Base, and test it in the playground without writing a single line of code.

Built-in Action Groups with Lambda — For teams already comfortable with Lambda, the OpenAPI schema approach gives you a structured, well-documented way to wire tools.

Managed versioning and aliases — The Bedrock console handles agent versions, aliases, and rollbacks out of the box. No Docker, no ECR, no CDK.

Low barrier to value — You can prove the concept to stakeholders in hours, not days. That first “wow, it understood my question and mapped hospitality to hotels” moment matters.

The Intern is the right choice when you’re exploring whether AI Agents fit your business at all. The Employee is the right choice when you already know they do and you need to scale, customise, and operate them in production.

Think of it this way: you wouldn’t hire a full-time Employee before you’ve validated the role with an Intern. Native Bedrock Agents are that validation step. Strands with AgentCore is what comes after.

What Changed: The Migration Map

The Architecture, Before and After

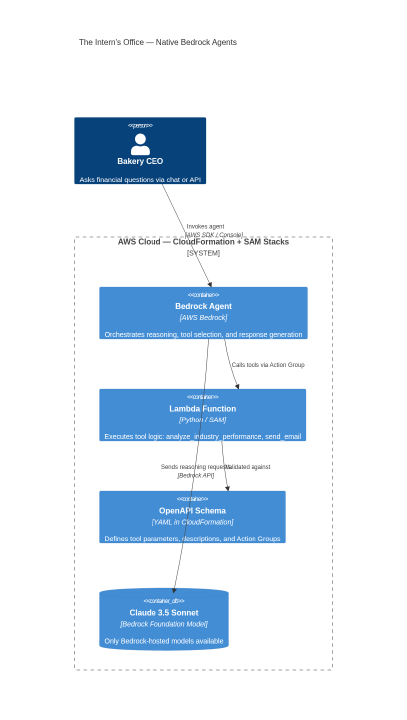

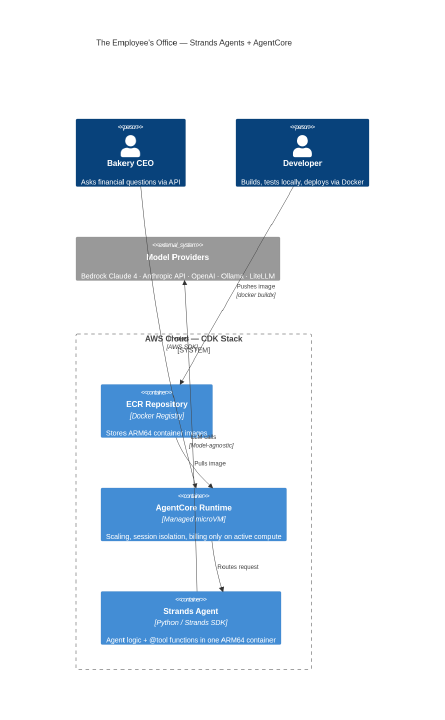

Before — The Intern’s Office:

After — The Employee’s Office:

The key difference: the Employee’s brain and Laptop now live in the same container. No more Lambda cold starts for tool calls. No more OpenAPI schemas. No more version management through the Bedrock console. Everything is code, everything is testable, everything ships with ./deploy_image.sh.

The Big Question: Is Lambda Dead for Agents?

Short answer: no, but it has lost its place as the default.

Lambda was the hero of serverless for a decade. I used it for the Intern’s tooling in the previous article. But for AI Agents specifically, Lambda’s billing model creates an uncomfortable economic reality.

The I/O Wait Problem

An AI Agent is not a traditional Lambda workload. When the CEO asks “How did cafes perform in Q1 2024?”, the Employee does this:

1. Receive prompt → 1 ms
2. Call LLM for reasoning → wait 3–8 seconds
3. Execute tool (analyse data) → 50 ms
4. Call LLM for reflection and formatting → wait 3–8 seconds
5. Return response → 1 ms

In a 15-second interaction, the function spends roughly 70% of its time waiting for the LLM to think. With Lambda, you pay for every millisecond of that wait. With AgentCore Runtime, CPU is not billed while the process sits idle in I/O wait (memory is priced separately and is still billed).

Think of it this way: Lambda charges rent while the Employee sits and thinks. AgentCore charges for the CPU only when the Employee acts.

The Economics, Side by Side

A Concrete Example

Consider our bakery CEO’s Employee processing 100 questions per day, each averaging 12 seconds of execution with roughly 8 seconds of LLM wait time:

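Lambda (1024 MB, ARM64):

Duration billed: 100 × 12 s × 1 GB = 1,200 GB-seconds/day

Monthly (30 days): ~36,000 GB-seconds

But 24,000 of those GB-seconds (8 of every 12 seconds) are pure waiting

AgentCore:

CPU is billed only for the ~4 seconds of active computation per request

Billed CPU time is therefore roughly two-thirds lower (4 s instead of 12 s per request); memory is priced separately and is still billed during the wait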

At 100 requests/day, the difference is a few dollars. At 10,000 requests/day with complex multi-step reasoning chains, the gap becomes material.
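The arithmetic above is easy to rerun for your own traffic. This sketch compares billed seconds, not dollars, under stated assumptions: a 30-day month, Lambda billing the full duration at 1 GB, and the “active only” figure counting just the non-waiting seconds. AgentCore actually prices CPU (vCPU-hours) and memory (GB-hours) separately, and memory keeps accruing during the wait, so treat the active-only number as the CPU-side saving rather than the whole bill.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    requests_per_day: int
    seconds_per_request: float       # wall-clock duration of one request
    wait_seconds_per_request: float  # part of that spent waiting on the LLM
    memory_gb: float = 1.0           # Lambda memory setting (1024 MB)
    days: int = 30


def lambda_gb_seconds(w: Workload) -> float:
    """Lambda bills the full duration, waiting included."""
    return w.requests_per_day * w.seconds_per_request * w.memory_gb * w.days


def active_gb_seconds(w: Workload) -> float:
    """Duration left if I/O wait is not billed (the CPU-side view)."""
    active = w.seconds_per_request - w.wait_seconds_per_request
    return w.requests_per_day * active * w.memory_gb * w.days


bakery = Workload(requests_per_day=100, seconds_per_request=12, wait_seconds_per_request=8)
total = lambda_gb_seconds(bakery)
active = active_gb_seconds(bakery)
print(f"Lambda: {total:,.0f} GB-s/month, of which {total - active:,.0f} GB-s are waiting")
print(f"Active only: {active:,.0f} GB-s/month ({1 - active / total:.0%} less)")
```

For the bakery workload this prints 36,000 GB-s, 24,000 of them waiting, and 12,000 active GB-s (67% less). Change requests_per_day to 10,000 and the same ratio applies to a number a hundred times larger.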

When Lambda Still Makes Sense

Lambda isn’t dead — it’s specialised. Choose Lambda when:

Single-shot, low-latency tasks — Quick classification or extraction that completes in under 3 seconds with minimal LLM wait time.

High volume, tiny tasks — Millions of simple operations where the 1 million free requests/month tier dominates the economics.

Existing infrastructure — Your organisation has a mature Lambda CI/CD pipeline, and the migration cost exceeds the runtime savings.

Choose AgentCore when:

Complex multi-step reasoning — The Employee loops through multiple LLM calls and tool invocations. You refuse to pay rent while they think.

Long-running workflows — Anything beyond Lambda’s 15-minute ceiling (AgentCore Runtime sessions can run for up to 8 hours): research reports, multi-industry comparisons, deep analysis.

Memory-heavy agents — Built-in short- and long-term memory beats manually wiring DynamoDB.

Compliance requirements — Cedar-based policy guardrails and session isolation are essential for regulated environments.
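The rules of thumb above can be written down as a small function, which is handy when you have to justify the choice in a design review. This is a sketch under assumptions: the field names are hypothetical, the 3-second and 15-minute thresholds come from the lists above, and the “existing infrastructure” criterion is deliberately left out because it depends on migration cost, not on the workload.

```python
from dataclasses import dataclass

LAMBDA_MAX_SECONDS = 15 * 60  # Lambda's hard timeout ceiling


@dataclass(frozen=True)
class AgentProfile:
    typical_seconds: float      # end-to-end duration of one request
    llm_calls_per_request: int
    needs_memory: bool = False
    regulated: bool = False


def recommend_runtime(p: AgentProfile) -> str:
    """Encode the rules of thumb above; a starting point, not a verdict."""
    if p.typical_seconds > LAMBDA_MAX_SECONDS:
        return "agentcore"  # Lambda cannot run it at all
    if p.needs_memory or p.regulated:
        return "agentcore"  # built-in memory, policy guardrails, session isolation
    if p.llm_calls_per_request > 1:
        return "agentcore"  # multi-step reasoning: do not pay for the wait
    return "lambda"         # single-shot task with minimal LLM wait
```

The order of the checks matters: hard constraints (the timeout) come first, then requirements that only one side meets, and only then the cost-driven preference.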

The Verdict: ECR Is Hot Again

The container renaissance for AI workloads is real. ECR + AgentCore gives you:

ARM64 Graviton for cost-efficient compute

Local development parity — the same Docker image runs on your laptop and in production

No per-tool-call Lambda cold starts compounding LLM latency, because the tools run inside the same container as the agent

A billing model designed for agentic workloads, not request-response APIs

For serious agentic AI, the Lambda-first reflex needs a rethink. Containers are the natural home for Employees that think, wait, reason, and think again.

First Day on the Job: Deploying the Employee

Just like we walked through the Intern’s deployment, here’s how the Employee starts work. And this time, you can test locally before the Employee ever touches AWS.

Prerequisites

```shell
# Python package manager
curl -LsSf https://astral.sh/uv/install.sh | sh

# AWS CDK CLI
npm install -g aws-cdk

# Docker buildx (for ARM64 builds)
docker buildx create --use
```

Step 1: Test Locally (The Intern Could Never)

```shell
# Clone and install
git clone https://github.com/javatask/ai-agent-ceo-fin-advisor.git
cd ai-agent-ceo-fin-advisor/strands
uv sync

# Run the Employee locally
uv run python run_agent.py

# In another terminal, ask the same question as the Intern
curl -X POST http://localhost:8080/invocations \
  -H "Content-Type: application/json" \
  -d '{"prompt": "How did we do with the hospitality business in the first half of the year?"}'
```

The Employee answers on your laptop. No AWS deployment. No waiting for CloudFormation. No prepare-agent step.
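This local test works because AgentCore Runtime talks to the container over plain HTTP: it expects an ARM64 container listening on port 8080 that answers POST /invocations and GET /ping. The repository’s run_agent.py builds on the Strands tooling; the stand-in below uses only the standard library to show the same contract, with a canned answer instead of an agent. The {"status": "Healthy"} ping payload and the response shape are assumptions chosen for illustration (the shape mirrors what Step 5 parses).

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class StubAgentHandler(BaseHTTPRequestHandler):
    """Stand-in for the agent container: GET /ping and POST /invocations."""

    def _send_json(self, status: int, body: dict) -> None:
        data = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def do_GET(self) -> None:
        if self.path == "/ping":
            self._send_json(200, {"status": "Healthy"})  # assumed health payload
        else:
            self._send_json(404, {"error": "not found"})

    def do_POST(self) -> None:
        if self.path != "/invocations":
            self._send_json(404, {"error": "not found"})
            return
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        # A real container would call the Strands agent here; the stub echoes the prompt.
        answer = f"(stub) received: {payload.get('prompt', '')}"
        self._send_json(200, {"result": {"role": "assistant", "content": [{"text": answer}]}})

    def log_message(self, *args) -> None:  # silence per-request logging
        pass


def serve(host: str = "127.0.0.1", port: int = 8080) -> ThreadingHTTPServer:
    """Start the stub in a background thread (a container would bind 0.0.0.0)."""
    server = ThreadingHTTPServer((host, port), StubAgentHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def ask(prompt: str, port: int = 8080) -> dict:
    """Python equivalent of the curl call: POST a prompt to /invocations."""
    request = urllib.request.Request(
        f"http://127.0.0.1:{port}/invocations",
        data=json.dumps({"prompt": prompt}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read())
```

Because the contract is this small, anything that speaks it can be tested with curl on a laptop. In a standalone script, keep the main thread alive after serve() (for example with threading.Event().wait()), since the server runs in a daemon thread.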

Step 2: Deploy Infrastructure

```shell
cd cdk
cdk bootstrap  # First time only
cdk deploy     # Creates ECR repo + IAM role
```

Step 3: Ship the Employee to AWS

```shell
cd ..
./deploy_image.sh <ecr-repository-uri-from-cdk-output>
```

Step 4: Create the Employee’s Office

This is the step where we actually create the AgentCore Runtime — the managed environment that will run our container. Think of Steps 2–3 as building the office and moving the furniture in (ECR repo + Docker image). Step 4 is handing the keys to AgentCore and saying “run this.”

Why is this a separate script and not part of the CDK stack? At the time of writing, AgentCore Runtime doesn’t have native CloudFormation support yet. You can automate it via a CDK Custom Resource backed by a Lambda (the stack.py in the repo has a commented-out example), but for a first deployment, a simple boto3 script is clearer and easier to debug.

```shell
python3 << 'EOF'
import boto3

client = boto3.client('bedrock-agentcore-control', region_name='us-east-1')
cfn = boto3.client('cloudformation', region_name='us-east-1')

# Read the ECR URI and role ARN from the CDK stack outputs
stack = cfn.describe_stacks(StackName='StrandsAgentStack')['Stacks'][0]
outputs = {o['OutputKey']: o['OutputValue'] for o in stack['Outputs']}

response = client.create_agent_runtime(
    agentRuntimeName='ceo_fin_advisor_v2',
    agentRuntimeArtifact={
        'containerConfiguration': {
            'containerUri': f"{outputs['ECRRepositoryUri']}:latest"
        }
    },
    networkConfiguration={'networkMode': 'PUBLIC'},
    roleArn=outputs['AgentRoleArn'],
)

print(f"Runtime ARN: {response['agentRuntimeArn']}")
EOF
```
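The runtime may not be ready to take traffic the moment create_agent_runtime returns, so a deployment script usually waits for it. Here is a minimal, SDK-agnostic sketch: wait_until_ready takes any zero-argument function that returns the current status string. The status names (READY, CREATE_FAILED, UPDATE_FAILED) and the runtime/<id> suffix of the ARN are assumptions to check against the boto3 reference for bedrock-agentcore-control, whose get_agent_runtime call is the natural source of the status.

```python
import time
from typing import Callable

READY = "READY"
FAILED = {"CREATE_FAILED", "UPDATE_FAILED"}  # assumed failure states


def runtime_id_from_arn(arn: str) -> str:
    """Take the part after 'runtime/' in an ARN like ...:runtime/<id> (assumed format)."""
    _, sep, runtime_id = arn.rpartition("runtime/")
    if not sep or not runtime_id:
        raise ValueError(f"not an agent runtime ARN: {arn}")
    return runtime_id


def wait_until_ready(
    get_status: Callable[[], str],
    timeout_s: float = 600,
    poll_s: float = 10,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll get_status() until READY; raise on a failure state or on timeout."""
    deadline = time.monotonic() + timeout_s
    while True:
        status = get_status()
        if status == READY:
            return status
        if status in FAILED:
            raise RuntimeError(f"agent runtime ended in {status}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"still {status} after {timeout_s} s")
        sleep(poll_s)


# Assumed usage with boto3 (verify the response shape in the boto3 docs):
# rid = runtime_id_from_arn(response["agentRuntimeArn"])
# wait_until_ready(lambda: client.get_agent_runtime(agentRuntimeId=rid)["status"])
```

Injecting get_status and sleep keeps the helper testable without AWS: a fake sequence of statuses exercises every branch in milliseconds.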

Step 5: Ask the Same Question

```python
import json

import boto3

client = boto3.client('bedrock-agentcore', region_name='us-east-1')

response = client.invoke_agent_runtime(
    agentRuntimeArn="<runtime-arn-from-step-4>",
    # Session IDs must be at least 33 characters; reuse one to continue a conversation
    runtimeSessionId="session_12345678901234567890123456789012",
    payload=json.dumps({
        "prompt": "How did we do with the hospitality business in the first half of the year?"
    }),
)

# The response body is a stream; its JSON shape is whatever the container returns
result = json.loads(response['response'].read())
print(result['result']['content'][0]['text'])
```

Same question. Same CEO. Smarter Employee.
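Two details in that call are easy to get wrong. The runtimeSessionId must be long enough (the InvokeAgentRuntime API reference requires at least 33 characters at the time of writing, which is why the example pads it), and reusing the same ID is what keeps follow-up questions in one session. And the response body is a stream whose JSON shape depends on what your container returns. A small stdlib sketch of both; new_session_id and extract_text are hypothetical helpers, and extract_text assumes the result/content/text shape used above.

```python
import io
import json
import uuid
from typing import BinaryIO

MIN_SESSION_ID_LENGTH = 33  # per the InvokeAgentRuntime API reference at the time of writing


def new_session_id(prefix: str = "ceo") -> str:
    """One ID per CEO conversation; reuse it for follow-up questions."""
    return f"{prefix}-{uuid.uuid4()}"  # the UUID alone is 36 characters


def extract_text(body: BinaryIO) -> str:
    """Read a streamed JSON body and return the first text block of the answer."""
    result = json.loads(body.read())
    try:
        return result["result"]["content"][0]["text"]
    except (KeyError, IndexError, TypeError) as exc:
        raise ValueError(f"unexpected response shape: {result!r}") from exc


# Local check with a fake streamed body shaped like the Step 5 response:
fake_body = io.BytesIO(b'{"result": {"content": [{"text": "Hotels grew in H1."}]}}')
print(extract_text(fake_body))  # Hotels grew in H1.
```

In Step 5 you would pass new_session_id() as runtimeSessionId and replace the last two lines with print(extract_text(response['response'])); a malformed response then fails with a readable message instead of a bare KeyError.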

Giving the Employee a New Tool: Before and After

Remember the pain of adding a tool to the Intern? Let’s say the CEO now wants to compare two industries side by side.

Before — Giving the Intern a New Tool:

1. Write Lambda function code for the new tool
2. Add a SAM resource for the new Lambda (or update the existing one)
3. Add the function definition to the OpenAPI-style schema in CloudFormation
4. Update or create an Action Group in the Bedrock Agent config
5. Deploy CloudFormation:

```shell
aws cloudformation deploy ...
```

6. Prepare the agent:

```shell
aws bedrock-agent prepare-agent --agent-id $AGENT_ID
```

After — Giving the Employee a New Tool:

1. Write the function:

```python
@tool
def compare_industries(industry_a: str, industry_b: str, year: int) -> str:
    """Compare financial performance between two industries for a given year.

    Args:
        industry_a: First industry to compare
        industry_b: Second industry to compare
        year: Year to analyze (e.g. 2024)
    """
    data_a = generate_industry_data(industry_a, f"{year}-01-01", f"{year}-12-31")
    data_b = generate_industry_data(industry_b, f"{year}-01-01", f"{year}-12-31")
    return format_comparison(data_a, data_b)
```

2. Add it to the Employee’s Laptop:

```python
agent = Agent(
    model="us.anthropic.claude-sonnet-4-20250514-v1:0",
    tools=[analyze_financial_performance, send_email_report, compare_industries],
)
```

3. Test locally:

```shell
uv run python run_agent.py
```

4. Deploy:

```shell
./deploy_image.sh <ecr-uri>
```

Four steps instead of six. Zero infrastructure configuration. No prepare-agent ritual. The productivity gain is not incremental — it is transformational.
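Because a tool is still an ordinary Python function, it can be unit tested with no LLM, no container, and no AWS account. The sketch leaves the @tool decorator off so it runs with the standard library alone; generate_industry_data and format_comparison here are hypothetical stand-ins with made-up numbers, not the repository’s helpers.

```python
import unittest


def generate_industry_data(industry: str, date_from: str, date_to: str) -> dict:
    """Hypothetical stand-in: deterministic placeholder totals for one industry."""
    placeholder_billings = {"cafes": 1200.0, "hotels": 3400.0}
    return {"industry": industry, "period": (date_from, date_to),
            "billings": placeholder_billings.get(industry, 0.0)}


def format_comparison(data_a: dict, data_b: dict) -> str:
    """Hypothetical stand-in: one line naming the leader."""
    leader = max(data_a, data_b, key=lambda d: d["billings"])
    return (f"{data_a['industry']}: {data_a['billings']:.0f} vs "
            f"{data_b['industry']}: {data_b['billings']:.0f} (leader: {leader['industry']})")


def compare_industries(industry_a: str, industry_b: str, year: int) -> str:
    """Same body as the tool above, minus the decorator."""
    data_a = generate_industry_data(industry_a, f"{year}-01-01", f"{year}-12-31")
    data_b = generate_industry_data(industry_b, f"{year}-01-01", f"{year}-12-31")
    return format_comparison(data_a, data_b)


class CompareIndustriesTest(unittest.TestCase):
    def test_names_the_leader(self):
        self.assertEqual(compare_industries("cafes", "hotels", 2024),
                         "cafes: 1200 vs hotels: 3400 (leader: hotels)")

    def test_unknown_industry_counts_as_zero(self):
        self.assertIn("factories: 0", compare_industries("factories", "cafes", 2024))


if __name__ == "__main__":
    unittest.main()
```

A test like this runs in milliseconds on every commit, which is what makes the “test locally” step meaningful: the tool logic is verified before the Employee, or the CEO, ever calls it.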

The CDK Stack: The Employee’s Office Lease

For those who want to see the infrastructure, here are the core resources of the CDK stack. Compare this to the original bedrock-agent-stack.yaml: it’s significantly simpler because the agent logic lives in the container, not in CloudFormation.

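```python
from aws_cdk import CfnOutput, RemovalPolicy, Stack, aws_ecr as ecr, aws_iam as iam
from constructs import Construct


class StrandsAgentStack(Stack):  # class name assumed; the deployed stack is StrandsAgentStack
    def __init__(self, scope: Construct, construct_id: str, **kwargs) -> None:
        super().__init__(scope, construct_id, **kwargs)

        # ECR Repository: where the Employee's container image lives
        ecr_repo = ecr.Repository(
            self, "AgentRepository",
            repository_name="ceo-fin-advisor-strands",
            removal_policy=RemovalPolicy.DESTROY,
        )

        # IAM Role: the Employee's permission badge
        agent_role = iam.Role(
            self, "AgentRuntimeRole",
            assumed_by=iam.ServicePrincipal("bedrock-agentcore.amazonaws.com"),
        )

        # Bedrock model access: which Universities the Employee can consult.
        # Cross-region inference profiles (the "us." model IDs) can route requests
        # to other US regions, so the foundation-model ARN uses a region wildcard.
        agent_role.add_to_policy(iam.PolicyStatement(
            actions=["bedrock:InvokeModel", "bedrock:InvokeModelWithResponseStream"],
            resources=[
                "arn:aws:bedrock:*::foundation-model/*",
                "arn:aws:bedrock:*:*:inference-profile/*",
            ],
        ))

        # ECR pull: so AgentCore can fetch the Employee's container
        ecr_repo.grant_pull(agent_role)

        # Outputs read by the Step 4 script
        CfnOutput(self, "ECRRepositoryUri", value=ecr_repo.repository_uri)
        CfnOutput(self, "AgentRoleArn", value=agent_role.role_arn)
```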

Three resources. One cdk deploy. The Employee is ready. And still under GitOps control — the same best practice we established with the Intern.

What’s Next for the Employee

The migration to Strands is not just a framework swap — it’s a foundation for the Employee’s career growth:

Multi-Agent Team — A “Head of Finance” Employee that delegates to specialised Industry Analysts using the Agent-as-Tool pattern. One CEO question, multiple Employees collaborating.

Persistent Memory — AgentCore’s long-term memory, so the CEO can say, “Compare this to what you told me last quarter,” and the Employee actually remembers.

Policy Guardrails — Cedar-based rules that enforce financial reporting compliance outside the Employee’s reasoning loop. No more hoping the LLM follows safety instructions.

CI/CD Pipeline — Automated testing and deployment so Employee updates go from git commit to production in minutes. True GitOps for AI Agents.

Conclusion

The Intern served us well — and still serves others well. For teams exploring AI Agents for the first time, native Bedrock Agents remain the fastest path from idea to working prototype. That console-driven, no-code-required first experiment is genuinely valuable. Don’t skip it.

But once you’ve validated the concept and the CEO is asking for more tools, more industries, faster iteration, and production reliability, the Intern needs a promotion. The Employee, built on Strands Agents and deployed via AgentCore, is flexible. It runs locally, switches models, adds tools in minutes, explains its reasoning, and lives in a container that runs the same everywhere.

And perhaps most importantly: the Employee’s office (AgentCore) doesn’t charge CPU rent while the Employee is thinking. For agentic AI workloads where roughly 70% of execution time is LLM wait, that changes the economics fundamentally.

The Intern has graduated. The Employee has arrived. And they brought their own container.

The complete repository is at github.com/javatask/ai-agent-ceo-fin-advisor. The original native Bedrock Agent lives in the root; the Strands migration is in the strands/ folder. Compare them side by side — the difference speaks for itself.