Bridging the Gap: MCP vs. AWS Bedrock for Industrial AI Integration

Introduction: The Connection Challenge

As organizations increasingly deploy AI agents as “digital employees” in industrial environments, a critical question emerges: how do we effectively connect these agents to the data sources they need to function? This article explores two primary strategies for bridging this gap and examines when each approach delivers optimal value.

The challenge is not merely technical, but strategic. Organizations must choose between standardized, interoperable solutions and proprietary, tightly integrated frameworks. Each approach offers distinct advantages and limitations that directly impact return on investment, security posture, and operational effectiveness.

We will examine two main strategies:

- Using the Model Context Protocol (MCP) as a standardized approach for connecting large language models to data sources

- Custom frameworks, such as AWS Bedrock Agents, for proprietary integration

Our previous articles established two fundamental principles. First, generative AI functions as a skilled digital worker that needs clear procedures and comprehensive instructions to perform specific actions effectively. Second, data is the foundation of trust between digital workers and human operators. When AI agents make recommendations, operators need confidence in the underlying analysis, and that confidence comes from comprehensive longitudinal data that supports transparent, explainable recommendations backed by historical patterns.

The Industrial Scenario: Network Troubleshooting

To illustrate both approaches, we return to our network troubleshooting scenario from the first article. The problem begins with a simple question: “Why can’t the PLC connect to New Sensor 2?”

When a human network engineer approaches this problem, they follow a systematic methodology: gathering fundamental information (network topology, device inventory, access credentials), validating basic connectivity, then examining configuration details by comparing VLAN settings, IP assignments, and routing tables across devices.

This process requires access to multiple information sources: network documentation, device manuals, configuration management databases, and direct device interfaces. The engineer must correlate information across these sources to identify the root cause and implement a solution.
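To make the comparison step concrete, here is a minimal Python sketch of the first checks an engineer, or an agent with access to the same data, might run. It assumes the configuration values have already been collected from the devices; the `DeviceConfig` fields, addresses, and VLAN numbers are hypothetical and do not come from a real installation.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class DeviceConfig:
    name: str
    ip: str       # interface address in CIDR form, e.g. "192.168.10.5/24"
    vlan: int     # access VLAN of the switch port the device is attached to
    gateway: str  # default gateway configured on the device


def diagnose(a: DeviceConfig, b: DeviceConfig) -> list:
    """Compare VLAN and IP settings of two endpoints and list likely problems."""
    findings = []
    net_a = ipaddress.ip_interface(a.ip).network
    net_b = ipaddress.ip_interface(b.ip).network
    if a.vlan != b.vlan:
        findings.append(f"VLAN mismatch: {a.name} on VLAN {a.vlan}, {b.name} on VLAN {b.vlan}")
    if net_a != net_b:
        findings.append(f"Different subnets: {net_a} vs {net_b}; traffic needs routing")
    # A gateway outside the device's own subnet cannot be reached directly.
    for dev in (a, b):
        net = ipaddress.ip_interface(dev.ip).network
        if ipaddress.ip_address(dev.gateway) not in net:
            findings.append(f"{dev.name}: gateway {dev.gateway} is outside {net}")
    return findings


if __name__ == "__main__":
    plc = DeviceConfig("PLC", "192.168.10.5/24", 10, "192.168.10.1")
    sensor = DeviceConfig("New Sensor 2", "192.168.20.17/24", 20, "192.168.20.1")
    for finding in diagnose(plc, sensor):
        print(finding)
```

The checks only narrow the search; the engineer still has to confirm on the devices whether, for example, a router between the two VLANs is expected to exist.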

The MCP Approach: Standardized Integration

MCP Architecture

The Model Context Protocol offers a standardized method for connecting AI agents to data sources. In our network scenario, this requires network equipment vendors to provide MCP servers — either hosted directly on network devices or as customer-deployed applications with device credentials.

The MCP Server implementation includes three key components:

Tools: Executable functionality exposed to clients — for example, CLI connections for retrieving network topology and port status information.

Resources: Data and content that can be read by clients and used as context for LLM interactions — for example, web UI manuals that enable the AI agent to generate valid steps for specific network devices.

Prompts: Reusable prompt templates and workflows — for example, standardized VLAN information queries and port configuration checks.
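Under the hood, MCP messages are JSON-RPC 2.0 requests, and each of the three components has its own method in the MCP specification (`tools/call`, `resources/read`, `prompts/get`). The sketch below builds one request of each kind. The tool name, resource URI, and prompt name are hypothetical examples for the network scenario, and a real client would first complete the protocol's `initialize` handshake.

```python
import itertools
import json

_request_ids = itertools.count(1)  # JSON-RPC request IDs must be unique per session


def jsonrpc_request(method: str, params: dict) -> str:
    """Serialize one JSON-RPC 2.0 request as carried over an MCP connection."""
    return json.dumps({"jsonrpc": "2.0", "id": next(_request_ids), "method": method, "params": params})


# Tool: an executable action (hypothetical tool name and arguments).
call_tool = jsonrpc_request(
    "tools/call", {"name": "get_port_status", "arguments": {"device": "switch-01", "port": "1/3"}}
)
# Resource: read-only context addressed by a URI (hypothetical URI scheme).
read_resource = jsonrpc_request("resources/read", {"uri": "docs://hios/web-ui-manual/vlan"})
# Prompt: a reusable template filled in with arguments (hypothetical prompt name).
get_prompt = jsonrpc_request("prompts/get", {"name": "vlan_check", "arguments": {"device": "switch-01"}})

if __name__ == "__main__":
    for message in (call_tool, read_resource, get_prompt):
        print(message)
```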

Users configure an MCP host application (such as Claude Desktop) to connect to one or more MCP servers; the host runs one MCP client per server connection, creating a unified ecosystem of AI-accessible resources.

Figure 1. MCP host-client-server communication architecture.

Real-World Implementation: Belden’s HiOS Documentation via MCP Server

Belden’s journey with MCP began with a specific challenge: making our extensive HiOS (Hirschmann Operating System) documentation accessible to AI agents for network troubleshooting. HiOS powers our industrial networking equipment, and its documentation represents years of engineering expertise covering complex networking protocols, device configurations, and troubleshooting procedures.

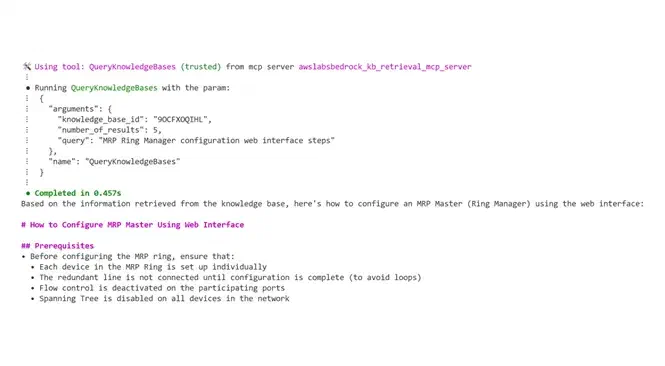

Our implementation used AWS Knowledge Bases to create an agentic RAG (Retrieval-Augmented Generation) system with advanced preprocessing techniques. We used the open-source Bedrock Knowledge Base Retrieval MCP Server from AWS Labs, which provided a standardized interface between MCP clients and our corporate AWS infrastructure. This allowed us to keep our existing AWS SSO security framework while exposing documentation capabilities to AI agents.

The AWS Knowledge Bases foundation proved crucial. Rather than relying solely on general foundation model knowledge, our system could retrieve specific, proprietary information from HiOS documentation to improve response relevancy and accuracy. When network engineers asked questions about device configurations or troubleshooting procedures, the AI agent could search our comprehensive documentation repository and provide responses backed by authoritative sources with proper citations.
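A minimal sketch of the retrieval step, assuming the boto3 `bedrock-agent-runtime` client and its `retrieve` operation, the Knowledge Bases query API that retrieval servers of this kind wrap. The knowledge base ID, region, and question are placeholders, and the numbered-context format is just one simple way to let the model cite its sources; it is not Belden's implementation.

```python
from typing import Any


def retrieve_passages(client: Any, kb_id: str, question: str, k: int = 5) -> list:
    """Query a knowledge base and keep each passage's text, source URI, and score."""
    response = client.retrieve(
        knowledgeBaseId=kb_id,
        retrievalQuery={"text": question},
        retrievalConfiguration={"vectorSearchConfiguration": {"numberOfResults": k}},
    )
    passages = []
    for result in response.get("retrievalResults", []):
        uri = result.get("location", {}).get("s3Location", {}).get("uri", "unknown source")
        passages.append({"text": result["content"]["text"], "source": uri, "score": result.get("score")})
    return passages


def build_grounded_context(passages: list) -> str:
    """Number each passage so the model can cite [1], [2], ... in its answer."""
    return "\n\n".join(f"[{i}] ({p['source']})\n{p['text']}" for i, p in enumerate(passages, start=1))


if __name__ == "__main__":
    import boto3  # needs AWS credentials, e.g. from an AWS SSO login

    runtime = boto3.client("bedrock-agent-runtime", region_name="eu-central-1")
    hits = retrieve_passages(runtime, "KB_ID_PLACEHOLDER", "How do I configure a VLAN on a HiOS switch?")
    print(build_grounded_context(hits))
```

Passing the client in as a parameter keeps the logic testable without AWS access and lets the same code run under whichever SSO profile the user is logged in with.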

The Technical Reality: Challenges and Complexity

However, our implementation revealed significant practical challenges. The setup was highly technical and required a capable MCP host application such as Claude Desktop to work effectively. This created immediate friction in our Microsoft Office-centric environment, where most users rely on familiar tools such as Amazon Q Pro and the Q Pro CLI for daily work.

Installation complexity emerged as a major barrier. Each workstation needed a Python or Node.js runtime to run the MCP server packages locally: straightforward for developers, but a significant hurdle for network engineers and operators without programming backgrounds.

Credential management added another layer of complexity. While our corporate AWS SSO setup provided elegant authentication for users already familiar with AWS workflows, extending access to field engineers and operators will require additional configuration and training.

The JSON configuration requirements created significant user experience friction. Engineers accustomed to graphical interfaces often find manual configuration editing error-prone and intimidating. Fortunately, Claude Desktop’s introduction of Desktop Extensions (DXT files) on June 26, 2025, has dramatically simplified this process, allowing one-click installation and configuration.
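For reference, a sketch of what that manual step involves: adding one entry under `mcpServers` in `claude_desktop_config.json` (on macOS under `~/Library/Application Support/Claude/`, on Windows under `%APPDATA%\Claude\`). The server package name and the `AWS_PROFILE`/`AWS_REGION` variables follow the AWS Labs README as we understand it, and the profile name is hypothetical; check the current README before relying on them.

```python
import json
from pathlib import Path

# Assumption: package name and environment variables as documented in the
# AWS Labs README at the time of writing; verify against the current README.
SERVER_NAME = "awslabs.bedrock-kb-retrieval-mcp-server"


def kb_server_entry(aws_profile: str, aws_region: str) -> dict:
    """One "mcpServers" entry that starts the server locally through uvx."""
    return {
        "command": "uvx",
        "args": [f"{SERVER_NAME}@latest"],
        "env": {"AWS_PROFILE": aws_profile, "AWS_REGION": aws_region},
    }


def merge_server(config: dict, name: str, entry: dict) -> dict:
    """Return a copy of the config with one server added or replaced.
    Servers that are already configured are left untouched."""
    merged = json.loads(json.dumps(config))  # deep copy of JSON-compatible data
    merged.setdefault("mcpServers", {})[name] = entry
    return merged


def update_config_file(path: Path, name: str, entry: dict) -> None:
    """Read, merge, and rewrite claude_desktop_config.json (created if missing)."""
    config = json.loads(path.read_text()) if path.exists() else {}
    path.write_text(json.dumps(merge_server(config, name, entry), indent=2))


if __name__ == "__main__":
    # Hypothetical profile name for a corporate AWS SSO login.
    entry = kb_server_entry(aws_profile="corp-sso", aws_region="eu-central-1")
    print(json.dumps(merge_server({}, SERVER_NAME, entry), indent=2))
```

A single misplaced brace or a wrong profile name in this file is enough to make the server silently fail to start, which is the friction a one-click extension package is meant to remove.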

MCP: Characteristics and Strategic Trade-offs

The MCP approach offers compelling advantages for organizations with specific requirements and capabilities.

Transparency stands out as a key benefit — all tools, prompts, and resources remain visible to users, providing grounds for inspection and customization of AI agent capabilities. This visibility is crucial for compliance requirements and debugging complex interactions, particularly in industrial environments where understanding system behavior is essential for safety and reliability.

Vendor independence represents another strategic advantage, though with important practical limitations. Organizations can theoretically use any compatible LLM, reducing dependence on specific AI providers. However, our experience revealed that prompts require significant optimization for different models, limiting practical portability.

Modular development is perhaps the most immediately practical benefit. Teams can develop specialized MCP servers independently — one for network analysis, another for documentation retrieval, a third for security procedures. This separation allows expertise to be focused where most effective within different teams, while maintaining a consistent interface for AI agents.
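When a host connects to several independently developed servers, tool names can collide (two teams may both ship a `search` tool). One common remedy, sketched below under the assumption of a simple `server__tool` naming convention, is for the host to prefix each tool with its server name and route the model's choice back to the right server. All server and tool names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    name: str
    description: str


def aggregate_tools(servers: dict) -> dict:
    """Merge tool lists from several servers into one registry, prefixing each
    tool with its server name so identically named tools cannot collide."""
    registry = {}
    for server_name, tools in servers.items():
        for tool in tools:
            registry[f"{server_name}__{tool.name}"] = (server_name, tool)
    return registry


def route(registry: dict, qualified_name: str) -> tuple:
    """Map the tool name chosen by the model back to (server, original tool name)."""
    server_name, tool = registry[qualified_name]
    return server_name, tool.name


if __name__ == "__main__":
    servers = {
        "network": [ToolSpec("search", "Find devices by name")],
        "docs": [ToolSpec("search", "Search HiOS manuals"), ToolSpec("get_page", "Read a manual page")],
    }
    registry = aggregate_tools(servers)
    print(sorted(registry))
    print(route(registry, "docs__search"))
```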

However, MCP also presents significant challenges. Infrastructure complexity increases substantially: organizations must deploy and maintain MCP server infrastructure, handle updates, monitor performance, and ensure high availability across multiple servers.

Authentication considerations multiply in distributed MCP environments. Each server requires its own authentication and authorization mechanisms, creating additional security considerations and potential points of failure.

The reality of LLM interoperability also falls short of theoretical promises. Despite vendor independence claims, maintaining multiple versions of prompts for different AI providers adds operational overhead that organizations must handle.
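As an illustration of that overhead, here is a hypothetical prompt registry that keeps one variant of a VLAN-check prompt per model family and falls back to a generic version. The family keys and template wording are invented for the example.

```python
from string import Template

# Hypothetical variants of one MCP prompt ("vlan_check"), one per model family.
PROMPT_VARIANTS = {
    "default": Template("Check the VLAN configuration of $device and list mismatches."),
    "family-a": Template("<task>Check VLAN configuration</task>\n<device>$device</device>\nList mismatches."),
    "family-b": Template("### Task\nCheck the VLAN configuration.\n### Device\n$device\n### Output\nBullet list of mismatches."),
}


def render_prompt(model_id: str, **values: str) -> str:
    """Use the variant whose key prefixes the model ID, else the default variant."""
    for family, template in PROMPT_VARIANTS.items():
        if family != "default" and model_id.startswith(family):
            return template.substitute(**values)
    return PROMPT_VARIANTS["default"].substitute(**values)


if __name__ == "__main__":
    print(render_prompt("family-a-v2", device="switch-01"))
    print(render_prompt("some-other-model", device="switch-01"))
```

Each additional variant is one more artifact to test whenever the underlying tools or models change, which is where the operational cost accumulates.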

Optimal Use Cases for MCP

MCP excels for organizations with publicly available documentation, standardized procedures, and minimal intellectual property protection concerns. Network device documentation, CLI command references, and standard troubleshooting procedures represent ideal applications where transparency and standardization provide clear benefits.

Organizations with strong internal development capabilities and preferences for open standards will find MCP particularly appealing. MCP also works well for those requiring maximum transparency and auditability, as the visibility of all tools, prompts, and resources allows for detailed inspection — crucial for regulatory compliance and operational safety in industrial environments.

The Custom Framework Approach: AWS Bedrock Agents

Beyond Building Blocks: When Customers Need Skilled Digital Workers

Although MCP serves as an excellent building block for AI integration, it represents a foundation rather than a complete solution. When customers need a system that functions as a skilled digital worker capable of complex reasoning, they must consider whether to assemble multiple MCP servers or bet on a custom framework designed for end-to-end AI applications.

The decision often comes down to practical realities. Consider our network troubleshooting scenario: a single web UI manual referenced in our MCP implementation contains over 1,000 pages of technical documentation. Across our product line, we maintain hundreds of such manuals. If we wanted to offer customers deep expertise across all these domains through MCP, we would need to provide potentially thousands of individual tools through multiple servers.

Several critical challenges surface:

- Tool overload: when an LLM encounters hundreds or thousands of available tools, it can become overwhelmed and struggle to select the most appropriate actions.

- Credential sprawl: in enterprise networks with hundreds of devices, each potentially hosting its own MCP server, host systems must manage complex credential schemes while ensuring secure access patterns.

- Excess privilege: most importantly, allowing LLMs to issue configuration commands directly via CLI when only data retrieval is needed carries unacceptable security implications.
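Two of these risks have common partial mitigations, sketched here in Python: shortlisting tools before they reach the model, and refusing any CLI command that is not a read operation. The word-overlap ranking is a deliberately simple stand-in for the embedding-based search a production system would more likely use, and the rule that read commands start with `show` is an assumption to verify per device family.

```python
import re

# Assumption: on the target devices every read-only CLI command starts with
# "show". Verify this per device family before relying on it.
READ_ONLY_VERBS = {"show"}
# Characters that could chain or inject a second command.
FORBIDDEN_CHARS = set(";&|`$\n")


def is_read_only(command: str) -> bool:
    """Allow a single command whose first word is an approved read verb."""
    if not command.strip() or FORBIDDEN_CHARS & set(command):
        return False
    return command.split()[0].lower() in READ_ONLY_VERBS


def _words(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def shortlist_tools(query: str, tools: dict, k: int = 5) -> list:
    """Rank tools by word overlap with the query and keep the top k, so the
    model is shown a handful of tools instead of thousands."""
    query_words = _words(query)
    scored = []
    for name, description in tools.items():
        overlap = len(query_words & _words(description))
        if overlap:
            scored.append((overlap, name))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best first, ties by name
    return [name for _, name in scored[:k]]


if __name__ == "__main__":
    tools = {
        "mrp_status": "Show MRP ring manager status",
        "vlan_table": "Show VLAN table for all ports",
        "fw_upgrade": "Upgrade device firmware",
    }
    print(shortlist_tools("why is the vlan on port 3 wrong", tools))
    print(is_read_only("show vlan"), is_read_only("show vlan; reload"))
```

Such a guard complements, rather than replaces, a read-only account on the device itself.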

Implementation Architecture

Custom frameworks like AWS Bedrock Agents take a fundamentally different approach: they move the complexity of security, hosting, data processing, and AI coordination into a managed platform. Rather than asking customers to configure and maintain distributed systems, this approach consolidates data, processing, and AI agents into one tightly integrated service that delivers secure, timely, and cost-effective answers when and where customers need them.
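From the customer application's side, the managed approach can collapse to a single API call. The sketch below assumes the boto3 `bedrock-agent-runtime` client and its `invoke_agent` operation, which streams the answer back in chunks. The agent ID, alias ID, region, and question are placeholders; knowledge bases, guardrails, and encryption would be configured on the agent in AWS rather than in this code.

```python
import uuid
from typing import Any, Optional


def ask_agent(client: Any, agent_id: str, alias_id: str, question: str,
              session_id: Optional[str] = None) -> str:
    """Send one question to a Bedrock agent and join the streamed text chunks."""
    response = client.invoke_agent(
        agentId=agent_id,
        agentAliasId=alias_id,
        sessionId=session_id or str(uuid.uuid4()),  # reuse an ID to keep conversation context
        inputText=question,
    )
    parts = []
    for event in response["completion"]:  # event stream; answer text arrives in "chunk" events
        chunk = event.get("chunk")
        if chunk:
            parts.append(chunk["bytes"].decode("utf-8"))
    return "".join(parts)


if __name__ == "__main__":
    import boto3  # needs credentials for the customer's dedicated account

    runtime = boto3.client("bedrock-agent-runtime", region_name="eu-central-1")
    print(ask_agent(runtime, "AGENT_ID", "ALIAS_ID", "Why is the MRP ring open?"))
```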

Belden’s AWS Bedrock Success: MRP Troubleshooting Agent

Belden developed an internal MRP (Media Redundancy Protocol) troubleshooting agent that clearly demonstrates the practical advantages of the custom framework approach.

It addresses one of the most complex challenges in industrial networking: analyzing redundancy configurations across multiple devices and network topologies. MRP provides critical redundancy for manufacturing processes, and misconfigurations can result in production downtime, safety concerns, and significant financial losses.

Our technical architecture integrates multiple specialized components:

- Secure edge-to-cloud connections that share only required data to minimize the attack surface

- Advanced preprocessing techniques to help the LLM identify probable root causes by structuring and contextualizing raw network data before analysis

- Integration with comprehensive documentation to offer solutions backed by authoritative sources and proven procedures
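The first two components can be illustrated with a small edge-side sketch: strip each device record down to an allowlist of fields before it leaves the site, then turn the raw MRP data into short findings the LLM can reason over. The record layout and field names are hypothetical, and the checks assume a statically configured ring with exactly one ring manager and all other devices as clients, each using two ring ports in the same MRP domain.

```python
from collections import Counter

# Hypothetical allowlist: only these fields of each device record leave the site.
ALLOWED_FIELDS = ("device", "mrp_role", "mrp_domain", "ring_ports")


def minimize(record: dict) -> dict:
    """Drop every field the cloud-side analysis does not need."""
    return {key: record[key] for key in ALLOWED_FIELDS if key in record}


def mrp_findings(records: list) -> list:
    """Turn minimized MRP records into short findings for the LLM prompt.
    Assumes a statically configured ring: one manager, all others clients."""
    findings = []
    managers = Counter(r.get("mrp_role") for r in records)["manager"]
    if managers != 1:
        findings.append(f"Expected exactly one ring manager, found {managers}")
    domains = sorted({str(r.get("mrp_domain")) for r in records})
    if len(domains) > 1:
        findings.append(f"Devices disagree on MRP domain: {domains}")
    for r in records:
        ports = r.get("ring_ports", [])
        if len(ports) != 2:
            findings.append(f"{r.get('device')}: expected 2 ring ports, found {len(ports)}")
    return findings


if __name__ == "__main__":
    raw = [
        {"device": "sw1", "mrp_role": "manager", "mrp_domain": "d1",
         "ring_ports": ["1/1", "1/2"], "serial": "S-001", "site": "Hall 3"},
        {"device": "sw2", "mrp_role": "client", "mrp_domain": "d1", "ring_ports": ["1/1"]},
    ]
    for line in mrp_findings([minimize(r) for r in raw]):
        print(line)
```

Doing the reduction at the edge means serial numbers, site names, and other unneeded fields never cross the connection, and the model receives a few precise findings instead of raw configuration dumps.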

The security layer of this architecture, shown in Figure 3, relies on three AWS controls that govern identity, encryption, and content safety for the Bedrock agent:

Figure 3. Multi-layer security architecture for the Bedrock agent integration.

AWS IAM, AWS KMS, and AWS Bedrock Guardrails each cover one of these layers: IAM restricts which identities and services can invoke the agent and reach its data, KMS manages the keys that encrypt stored data, and Guardrails filters harmful or off-topic content in the agent’s inputs and outputs. The architecture runs in a dedicated AWS account per customer; this single-tenant approach provides the strongest security and data isolation. We deploy models in the customer’s preferred region to comply with data residency requirements, and we can also offer deployment via the AWS European Sovereign Cloud for customers with the most stringent sovereignty requirements.
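To illustrate the identity layer only, here is a sketch that generates a least-privilege IAM policy allowing a caller to invoke exactly one agent alias, and refuses to do so for regions outside an assumed data-residency list. The region list, account ID, and agent identifiers are placeholders; KMS keys and guardrails would typically be attached to the agent resource itself rather than expressed here.

```python
import json

# Assumption: the customer has chosen these regions for data residency.
ALLOWED_REGIONS = {"eu-central-1", "eu-west-1"}


def invoke_only_policy(region: str, account_id: str, agent_id: str, alias_id: str) -> dict:
    """IAM policy that lets a caller invoke exactly one agent alias, nothing else."""
    if region not in ALLOWED_REGIONS:
        raise ValueError(f"Region {region} is outside the data-residency policy")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeOneAgentAlias",
                "Effect": "Allow",
                "Action": "bedrock:InvokeAgent",
                "Resource": f"arn:aws:bedrock:{region}:{account_id}:agent-alias/{agent_id}/{alias_id}",
            }
        ],
    }


if __name__ == "__main__":
    # 111122223333 is the placeholder account ID used in AWS documentation.
    print(json.dumps(invoke_only_policy("eu-central-1", "111122223333", "AGENT_ID", "ALIAS_ID"), indent=2))
```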

The full AWS Bedrock solution architecture is shown in the accompanying figure.

The Benefits of Integrated Frameworks

User-friendly procedures eliminate the need for complex configurations, LLM hosting decisions, or managing prompt interoperability issues across different AI providers. Customers can focus on their core networking challenges rather than becoming AI infrastructure experts.

Protection of intellectual property becomes straightforward within a managed framework. The custom framework approach allows companies to monetize their expertise while keeping proprietary algorithms and procedures secure from competitors.

AWS provides strong security built into the platform: encryption of data at rest and in transit, audit logging through AWS CloudTrail, and threat detection through Amazon GuardDuty. Implementing these capabilities independently would be costly and complicated for most organizations.

With managed services, cost management becomes much easier. Organizations benefit from efficient resource use and clear, predictable pricing with no capital investment required.

Optimal Use Cases for Custom Frameworks

The framework particularly suits organizations building customer-facing products and services where reliability, performance, and user experience directly impact business outcomes. When technical expertise represents competitive advantage, custom frameworks boost monetization while protecting proprietary knowledge and procedures.

For organizations that need strict security and compliance, custom frameworks are often the better fit. Managed services let teams use advanced AI tools without having to build or run the infrastructure themselves — freeing them to allocate more resources to what they do best.

Future Convergence and Industry Evolution

Both approaches will likely coexist as the industry matures. Public documentation and standard procedures may increasingly adopt MCP standards, while proprietary solutions and competitive advantages remain protected within custom frameworks.

The industry may eventually achieve greater standardization in tool and resource connectivity, though LLM prompt interoperability appears unlikely in the near term. This suggests MCP servers may need to maintain multiple prompt variations optimized for different language models, adding operational overhead.

Cloud providers continue to excel in managing complex security infrastructure, compliance requirements, and operational overhead. This advantage may persist as organizations seek to focus on core competencies rather than managing AI infrastructure.

Conclusion

Our real-world experience implementing both MCP and AWS Bedrock Agents reveals that integrating AI agents with enterprise data sources represents more than a technical choice — it reflects fundamental decisions about strategic priorities and organizational capabilities.

Belden’s MCP implementation for HiOS documentation integration demonstrated both the promise and the complexity of standardized approaches. While we successfully connected AI agents to our comprehensive technical documentation through AWS Knowledge Bases, the journey revealed significant practical hurdles. The need for technical host applications, complex installation procedures, and sophisticated credential management created barriers that limited adoption among our broader user base. However, the recent introduction of Desktop Extensions (DXT) for Claude Desktop addresses many of these usability concerns, suggesting that the MCP ecosystem is rapidly maturing.

Our AWS Bedrock implementation for MRP troubleshooting illustrated the power of integrated frameworks when organizations need complete solutions rather than building blocks. By moving the complexity of security, hosting, data processing, and AI coordination into a managed platform, we transformed what was previously a risky and time-intensive troubleshooting process into an accessible and reliable one.

Success in either approach requires honest assessment of organizational capabilities, clear understanding of use case complexity, and alignment between technical architecture decisions and business objectives. The most successful implementations will be those that recognize these trade-offs and choose the approach that best serves their specific combination of technical requirements, operational constraints, and strategic goals.

Originally published in Computer & Automation magazine.