Why Quadlet Is Different, Part 1: Resource Governance That Compose Can't Express

This series examines the specific technical capabilities — not available in the Compose specification — that make Podman Quadlet a structurally different tool for production workloads on industrial edge hardware. Each capability is mapped to a real operational problem on the factory floor.

The Directive Gap

A companion article on javatask.dev covers how Podman Quadlet maps to the Margo Application Description, the complexity of the specification change, and the device coverage pyramid. This series goes somewhere different: into the specific, line-by-line technical features that Compose cannot provide, structurally, because of its architectural position — sitting on top of the operating system rather than inside it.

This isn’t about Compose being poorly designed. The Compose specification was designed to be portable across container engines, which means it can only expose the lowest common denominator of features those engines support. That’s a reasonable design choice for developer tooling. But it creates a ceiling — and on industrial edge hardware, that ceiling is uncomfortably low.

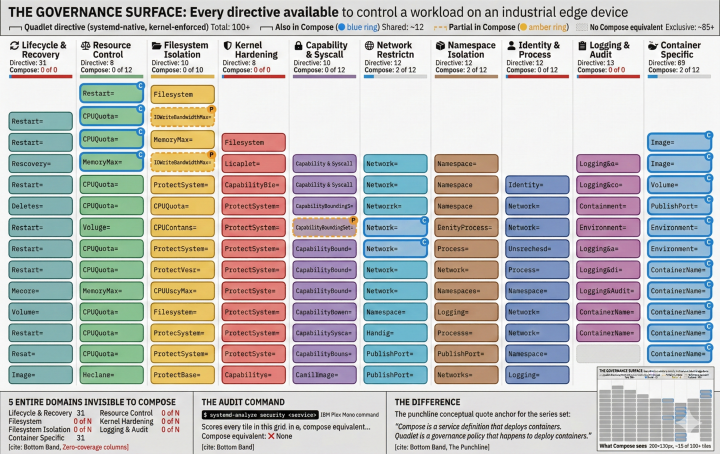

Quadlet files generate native systemd service units. That means every directive available in systemd.exec(5), systemd.resource-control(5), and systemd.service(5) is available in a Quadlet. The core service-management directives alone total approximately 63 — roughly 32 from systemd.exec(5) (execution environment), 21 from systemd.resource-control(5) (cgroup resource governance), and 10 from systemd.service(5) (service lifecycle). Add directives from systemd.unit(5) (dependencies, conditions, failure actions), systemd.kill(5) (signal and shutdown control), and systemd.scope(5), and the total comfortably exceeds 100 distinct security and resource control knobs. Compose exposes roughly 15.

The difference isn’t incremental. It’s structural. And on the industrial edge, it’s the difference between a workload that your OT security team can approve in a week and one that takes months of compensating controls.

This first post focuses on resource governance — the features that protect shared hardware from the workloads running on it.

Why Resource Control Matters More at the Edge

Industrial edge devices almost never run a single workload. A typical gateway runs a protocol adapter, a data historian buffer, an OTEL collector (Margo mandates this), and the actual application workload — all of which share 1–4 GB of RAM and 2–4 CPU cores. Compose gives you hard resource limits. Quadlet gives you a complete resource governance vocabulary.

The distinction matters because on a cloud server, resource contention means degraded performance. On a factory floor, resource contention can mean a SCADA historian missing data, a protocol gateway timing out PLC polling, or an entire device becoming unresponsive, with no on-site IT staff to reboot it.

Soft Limits vs. Hard Kills: MemoryHigh= and MemoryMax=

Compose provides deploy.resources.limits.memory, which maps to a hard cgroup memory limit. When a container exceeds it, the kernel OOM killer terminates the process. On a developer laptop, that’s a restart. On a factory floor, that’s a SCADA historian losing data during a shift change.

Quadlet (via systemd) provides two memory directives:

[Service]

MemoryHigh=200M

MemoryMax=256M

MemoryHigh= is a soft throttle. When the process exceeds this threshold, the kernel aggressively reclaims memory from its caches and applies back-pressure — the process slows down but keeps running. Only if the process reaches MemoryMax= does the OOM killer engage.

This two-tier model is critical for industrial workloads that have periodic memory spikes: a historian buffer flushing to disk, an AI inference model loading a new batch, a protocol adapter handling a burst of OPC-UA subscriptions. The soft limit absorbs the spike. The hard limit protects the system. Compose has no equivalent throttle. Its memory reservation settings describe memory to set aside for a container, not a threshold at which the container itself is slowed down, so the only ceiling it can express is the hard limit.

Industrial scenario: An OPC-UA collector aggregating 5,000 sensor tags experiences a 30-second burst when a production line restarts and all tags publish simultaneously. With MemoryHigh=200M, the collector slows down during the burst but keeps running. With Compose’s hard limit alone, it gets killed — and every downstream system loses 30 seconds of process data.
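One way to tell the two tiers apart in the field is to read the service's memory.events file from the cgroup v2 interface, where the high counter increments when the soft limit triggers throttling and oom_kill increments when a process in the cgroup is killed. The sketch below is a minimal illustration in Python, not part of Quadlet or systemd. The function names, the sample text, and the assumption that the unit lives under system.slice are illustrative.

```python
from __future__ import annotations

from pathlib import Path


def parse_memory_events(text: str) -> dict[str, int]:
    """Parse cgroup v2 memory.events content (one "key value" pair per line)."""
    events: dict[str, int] = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[1].isdigit():
            events[parts[0]] = int(parts[1])
    return events


def classify(events: dict[str, int]) -> str:
    """Report which tier of the two-tier model has been reached so far."""
    if events.get("oom_kill", 0) > 0:
        return "killed"      # a process in the cgroup was OOM-killed
    if events.get("high", 0) > 0:
        return "throttled"   # the soft limit was exceeded and reclaim was forced
    return "ok"


def read_service_memory_events(unit: str, slice_name: str = "system.slice") -> dict[str, int]:
    """Read memory.events for a unit; the slice location is an assumption."""
    path = Path("/sys/fs/cgroup") / slice_name / unit / "memory.events"
    return parse_memory_events(path.read_text())


# Hypothetical file content for a service that was throttled but never killed.
SAMPLE = "low 0\nhigh 42\nmax 0\noom 0\noom_kill 0\n"

if __name__ == "__main__":
    print(classify(parse_memory_events(SAMPLE)))
```

On a device, calling read_service_memory_events("sensor-gateway.service") after a burst would show whether the collector was merely slowed down or actually lost a process.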

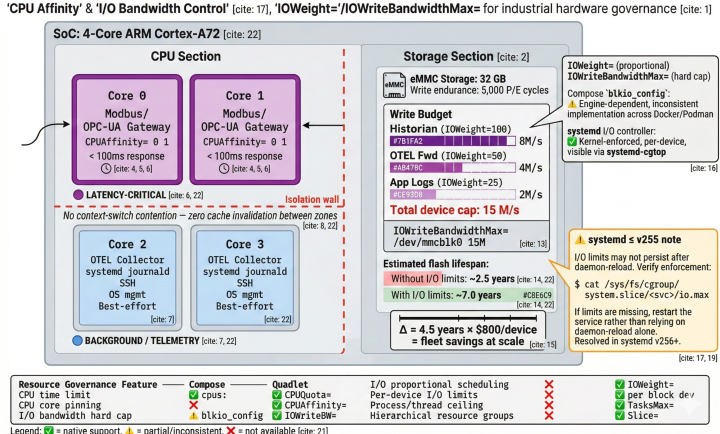

I/O Bandwidth Control: Protecting Flash Storage

Edge devices typically use eMMC or industrial SSD storage with limited write endurance — 3,000 to 10,000 P/E cycles for industrial-grade flash. A misbehaving container that floods the disk with logs or temporary files can measurably shorten device lifespan.

[Service]

IOWriteBandwidthMax=/dev/mmcblk0 5M

IOReadBandwidthMax=/dev/mmcblk0 20M

IOWeight=50

IOWriteBandwidthMax= caps the service’s write throughput to a named block device, so the limit is expressed per service and per physical device. This means you can say “this container may write no more than 5 MB/s to the eMMC.” Compose’s blkio_config supports similar syntax, but it’s engine-dependent and inconsistently implemented. The systemd directive is enforced by the kernel’s cgroup v2 I/O controller, and the resulting I/O usage is visible via systemd-cgtop.

IOWeight= provides proportional I/O scheduling. If two containers compete for the same disk, the one with IOWeight=100 gets roughly twice the bandwidth of one with IOWeight=50. This is qualitatively different from hard limits: it allows bursty workloads to use available I/O when the disk is idle, while keeping shares proportional under contention.
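The proportional arithmetic can be sketched in a few lines of Python. This is an idealised model under the assumption that every listed service is actively competing for the device. Real shares depend on the kernel's I/O scheduler and the workload mix, and the service names are hypothetical.

```python
from __future__ import annotations


def io_shares(weights: dict[str, int]) -> dict[str, float]:
    """Idealised share of device bandwidth for each actively competing service."""
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}


# Both services are busy: shares follow the 100:50 weight ratio.
contended = io_shares({"historian": 100, "otel-forwarder": 50})

# Only the forwarder is busy: it may use the whole device despite its lower weight.
idle_disk = io_shares({"otel-forwarder": 50})
```

The second call illustrates why a weight is not a cap: a low-weight service is only held back while a higher-weight neighbour is actually issuing I/O.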

⚠️ Operational note: I/O directive persistence (systemd ≤ v255). On distributions shipping systemd v255 or earlier, I/O resource limits such as IOReadBandwidthMax= may not persist correctly after a systemctl daemon-reload, or may be overwritten if multiple directives target the same block device in conflicting order. After any configuration change, verify enforcement by inspecting the cgroup interface directly:

cat /sys/fs/cgroup/system.slice/sensor-gateway.service/io.max

If the output does not reflect the expected limits, restart the affected service (systemctl restart sensor-gateway.service) rather than relying on daemon-reload alone. This behavior is resolved in systemd v256+.
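The io.max check can be automated. The Python sketch below parses the cgroup v2 io.max format (one line per device, keyed by major:minor number) and compares it with the limits you expect. The helper names and the 179:0 device number are hypothetical. The expected values assume that 5M and 20M are read as base-1000 megabytes per second, which is how the systemd documentation describes these directives; confirm on your systemd version.

```python
from __future__ import annotations


def parse_io_max(text: str) -> dict[str, dict[str, int | None]]:
    """Parse io.max lines such as '179:0 rbps=20000000 wbps=5000000 riops=max wiops=max'."""
    limits: dict[str, dict[str, int | None]] = {}
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        settings: dict[str, int | None] = {}
        for field in fields[1:]:
            key, _, value = field.partition("=")
            settings[key] = None if value == "max" else int(value)  # None = unlimited
        limits[fields[0]] = settings
    return limits


def limits_match(text: str, device: str, expected: dict[str, int]) -> bool:
    """True when every expected key carries exactly the expected value for the device."""
    actual = parse_io_max(text).get(device, {})
    return all(actual.get(key) == value for key, value in expected.items())


# Hypothetical io.max content for a service limited on device 179:0.
SAMPLE = "179:0 rbps=20000000 wbps=5000000 riops=max wiops=max\n"
EXPECTED = {"rbps": 20_000_000, "wbps": 5_000_000}
```

A health check could call limits_match on the real file after each deployment and restart the service when it returns False.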

Industrial scenario: A DIN-rail gateway runs a local SQLite historian and an OTEL log forwarder. The historian writes 2 MB/s under normal load. The OTEL forwarder occasionally spikes to 10 MB/s when backflushing after a network outage. Without per-device I/O limits, the backflush saturates the eMMC and the historian stalls. With IOWriteBandwidthMax= and IOWeight=, the historian’s writes are prioritized and the OTEL forwarder’s backflush is throttled to what the storage can sustain without wearing it out.

CPU Affinity: Pinning Workloads to Cores

On edge devices running real-time-adjacent workloads (not hard real-time, but latency-sensitive protocol bridging where 50 ms matters), CPU cache behaviour is critical. Each time the scheduler migrates a protocol adapter to a different core, it resumes on cold caches, and the result is latency jitter.

[Service]

CPUAffinity=0 1

CPUQuota=80%

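CPUAffinity= pins the service’s processes to specific CPU cores. The Compose specification’s nearest counterpart is the cpuset attribute, which asks the container engine to restrict a container to a set of cores. How that is enforced depends on the engine, whereas CPUAffinity= is applied by systemd to the service itself and sits in the same unit file as the rest of the resource policy.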

Industrial scenario: A Modbus/TCP to OPC-UA gateway on a 4-core ARM device must respond to PLC polling within 100 ms. Cores 0–1 are reserved for the gateway via CPUAffinity=0 1. Cores 2–3 run the OTEL collector and system services, each pinned with its own CPUAffinity=2 3. The latency-sensitive path and the telemetry pipeline no longer compete for the same cores. This isn’t achievable through Compose’s cpus: limit, which constrains total CPU time but doesn’t control which cores execute the work.
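Whether the pinning took effect can be confirmed from the Cpus_allowed_list field that Linux exposes in /proc/<pid>/status. The Python sketch below parses that field; the function names and the sample status text are illustrative assumptions rather than anything provided by Quadlet.

```python
from __future__ import annotations


def parse_cpu_list(spec: str) -> set[int]:
    """Expand a kernel CPU list such as '0-1' or '0,2-3' into a set of core numbers."""
    cpus: set[int] = set()
    for part in spec.strip().split(","):
        if not part:
            continue
        if "-" in part:
            first, last = part.split("-")
            cpus.update(range(int(first), int(last) + 1))
        else:
            cpus.add(int(part))
    return cpus


def allowed_cpus_from_status(status_text: str) -> set[int]:
    """Extract the allowed cores from the text of /proc/<pid>/status."""
    for line in status_text.splitlines():
        if line.startswith("Cpus_allowed_list:"):
            return parse_cpu_list(line.split(":", 1)[1])
    raise ValueError("Cpus_allowed_list not found")


# Hypothetical excerpt of a status file for a process pinned to cores 0 and 1.
SAMPLE_STATUS = "Name:\tgateway\nCpus_allowed_list:\t0-1\n"
```

On Linux, os.sched_getaffinity(pid) from the Python standard library returns the same information directly when the check runs on the device itself.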

Fork Bomb Protection: TasksMax=

Every process that a container forks counts toward system-wide PID limits. A misconfigured or compromised container that spawns thousands of threads can exhaust the system PID table, taking down the entire device — including the SSH daemon you need to diagnose the problem.

[Service]

TasksMax=64

TasksMax= sets a hard ceiling on the number of processes and threads a service can create. It’s enforced by the cgroup PIDs controller. Compose offers a per-container pids_limit attribute, but the default when it is omitted depends on the container engine, and it cannot be applied to a group of services at once the way a systemd slice can carry a shared TasksMax=.

Industrial scenario: A third-party vendor ships an edge analytics container with a thread-per-connection model. Under normal operation, it spawns 20 threads. During a network storm, it attempts to spawn 2,000. Without TasksMax=, the device becomes unresponsive. With TasksMax=64, thread creation fails once the container reaches its ceiling, so the damage is confined to that container refusing new connections. The device stays manageable.
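How close a service is to its ceiling can be read from the pids.current and pids.max files in its cgroup. The Python sketch below computes the remaining headroom from the contents of those two files. The function names are hypothetical, and the numbers reuse the scenario above.

```python
from __future__ import annotations


def parse_pids_max(text: str) -> int | None:
    """Return the task ceiling, or None when the file contains 'max' (no limit)."""
    value = text.strip()
    return None if value == "max" else int(value)


def task_headroom(current_text: str, max_text: str) -> int | None:
    """Tasks that can still be created before the ceiling; None means unlimited."""
    limit = parse_pids_max(max_text)
    if limit is None:
        return None
    return limit - int(current_text.strip())


# Scenario values: 20 threads in normal operation under TasksMax=64.
normal_headroom = task_headroom("20\n", "64\n")
```

A monitoring agent could alert when the headroom stays near zero, which would suggest either an attack or a ceiling that is too tight for the workload.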

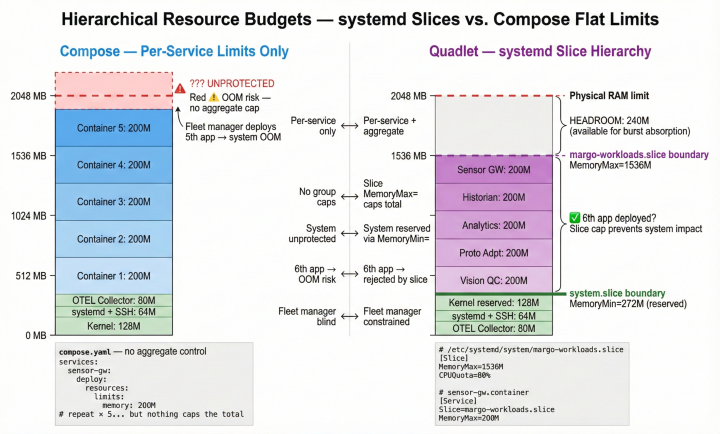

Hierarchical Resource Budgets: systemd Slices

This is perhaps the most significant resource control gap between Compose and Quadlet, and one that has no workaround in the Compose specification.

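systemd “slices” group services into hierarchical resource domains. You can create an edge-apps.slice that is capped at 60% of system RAM and 70% of one CPU core’s time, and place individual Quadlet services inside it. Note that CPUQuota= percentages are relative to a single core, so a budget of 70% of a 4-core device would be written CPUQuota=280%.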

# /etc/systemd/system/edge-apps.slice

[Slice]

MemoryMax=60%

CPUQuota=70%

# sensor-gateway.container

[Service]

Slice=edge-apps.slice

MemoryMax=200M

CPUQuota=40%

The sensor gateway gets at most 200M, but the combined total of all containers in the slice cannot exceed 60% of system memory. This is hierarchical resource budgeting — the same model Kubernetes uses with pod-level limits, but available on single-node devices without Kubernetes.

Compose has no concept of resource groups. Each service gets its own limits, but there’s no mechanism to cap the aggregate. If you deploy five containers each limited to 200M, they can collectively consume 1 GB — potentially starving the OTEL collector, the SSH daemon, or the systemd journal.

Industrial scenario: A device vendor ships a Margo-compliant gateway with 2 GB RAM. The vendor guarantees that 512 MB is reserved for system functions (kernel, systemd, OTEL, SSH). They define a margo-workloads.slice with MemoryMax=1536M. No matter how many Margo applications the fleet manager deploys, the workload aggregate cannot breach 1.5 GB. The system functions are protected by architecture, not by hoping every application vendor set their limits correctly.
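The budgeting rule can be expressed as simple arithmetic: a service is bounded by the smallest MemoryMax= on its path to the root, and the slice bounds the aggregate no matter how the per-service limits add up. The Python sketch below works through the 1536M slice from this scenario. The function names and the application counts are illustrative assumptions.

```python
from __future__ import annotations

MIB = 1024 * 1024


def effective_memory_limit(service_max: int, ancestor_maxes: list[int]) -> int:
    """A service can never use more than the tightest limit among itself and its slices."""
    return min([service_max, *ancestor_maxes])


def unbacked_memory(slice_max: int, service_maxes: list[int]) -> int:
    """Bytes promised to services in total that the slice budget cannot back."""
    return max(0, sum(service_maxes) - slice_max)


SLICE_MAX = 1536 * MIB            # margo-workloads.slice, MemoryMax=1536M
five_apps = [200 * MIB] * 5       # 1000 MiB requested: fits inside the slice
ten_apps = [200 * MIB] * 10       # 2000 MiB requested: exceeds the slice

overcommit_five = unbacked_memory(SLICE_MAX, five_apps)
overcommit_ten = unbacked_memory(SLICE_MAX, ten_apps)
```

With ten applications the per-service limits oversubscribe the budget by 464 MiB, yet the workloads together still cannot exceed the slice ceiling, so the 512 MB reserved for system functions stays intact.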

What Compose Can and Cannot Do — Resource Summary

✅ = native support · ⚠️ = partial/inconsistent · ❌ = not available

Up Next

Resource limits specify how much each workload can consume. But they don’t answer the harder question: what is each workload allowed to do?

In Part 2, we go into the cybersecurity directives that Quadlet inherits from systemd — filesystem isolation, kernel attack surface reduction, network microsegmentation, syscall filtering, and the systemd-analyze security scoring tool that gives IEC 62443 auditors a single-command verification for every workload on the device.

→ Continue to Part 2: Defense-in-Depth Cybersecurity at the Service Level

This series accompanies a Margo specification enhancement proposal for quadlet.v1 as a deployment profile. Discussion and feedback welcome on discourse.margo.org and the Margo specification GitHub.