Why Quadlet Is Different, Part 3: Production Lifecycle on the Factory Floor

How It Starts, Stops, and Recovers Matters

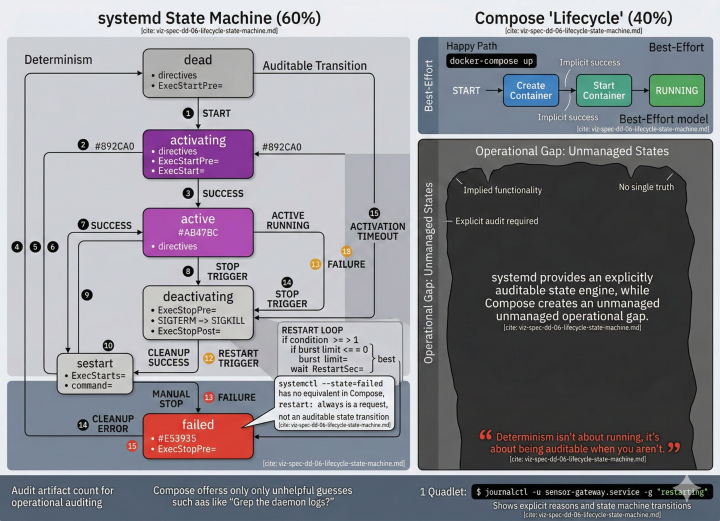

Part 1 covered resource governance — the directives that protect shared hardware from its workloads. Part 2 covered cybersecurity — the defense-in-depth layers that contain a compromised workload. This final post addresses the question that OT engineers care about most: what happens when things go wrong at 3 AM with nobody on-site?

On a cloud server, lifecycle management means restart policies and health checks. On a factory floor, it means: does the protocol bridge know that the hardware watchdog died? Does the historian flush its buffer before the power cut? Does the vision QC system trigger the safety relay when its container crashes? Does the serial adapter container remain quiet on gateways without the USB expansion module?

These are all lifecycle behaviours. Compose covers restart policies and, in part, health checks. Quadlet covers all of them, because systemd was designed to manage system services on machines that must run unattended for years, which is exactly what industrial edge devices are.

Application-Level Readiness: Type=notify and sd_notify

Compose healthchecks poll. The healthcheck directive in a Compose file tells the engine to periodically execute a command inside the container and check the exit code. This is pull-based and introduces latency — if the healthcheck interval is 10 seconds, dependents may wait up to 10 seconds before learning the service is ready.

systemd supports Type=notify, where the service pushes the readiness status via the sd_notify protocol:

[Service]

Type=notify

NotifyAccess=main

WatchdogSec=30s

When a Type=notify service starts, systemd does not consider it “active” until the process explicitly sends READY=1 via the sd_notify socket. This means the application itself determines when it’s ready — after the database connection is established, after the initial configuration is loaded, after the sensor subsystem is initialised. Dependent services wait for exactly the right signal, not a periodic probe.

WatchdogSec=30s adds continuous liveness monitoring by systemd itself. The process must send WATCHDOG=1 at least once every 30 seconds (the usual practice is to send it at half that interval); if it doesn’t, systemd considers the service failed and triggers the restart policy. This is fundamentally different from Compose healthchecks: it is not polling, it is a deadline-based watchdog enforced by PID 1. If the process enters an infinite loop or deadlocks, the watchdog fires even though the process is technically “running.”

Industrial scenario: An OPC UA server takes 15–45 seconds to initialise, depending on the number of configured nodes. With Compose, you must choose between a long healthcheck interval, which delays readiness detection, and a short one, which risks false failures while the server is still initialising. With Type=notify, the server sends READY=1 as soon as it is actually ready, whether that takes 15 seconds or 45, and dependent services start immediately afterwards.
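As a rough sketch, the readiness and watchdog settings above might sit in a complete Quadlet file like the one below. The file name, image reference, and TimeoutStartSec= value are illustrative assumptions, not taken from a real deployment. Notify=true is the Quadlet key that hands the notify socket to the application inside the container; without it, the container runtime reports readiness as soon as the container process starts. Whether WATCHDOG=1 keep-alives sent from inside the container reach systemd can depend on the Podman and conmon versions in use, so that path is worth testing on the target device.

```ini
# opcua-server.container (hypothetical file name)
[Unit]
Description=OPC UA Server

[Container]
# Hypothetical image reference
Image=oci.example.com/margo/opcua-server:1.0.0
# Let the application itself send READY=1 over the notify socket
Notify=true

[Service]
Type=notify
# Assumed start deadline: the 45 s worst case plus margin
TimeoutStartSec=90s
# The application must send WATCHDOG=1 at least once every 30 s
WatchdogSec=30s
# A missed watchdog deadline counts as a failure and triggers a restart
Restart=on-failure
```

A unit that needs the server would then declare After=opcua-server.service (plus Requires= or BindsTo= as appropriate) and would be started only once READY=1 has arrived.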

Deterministic Shutdown: Two Timeouts, Two Layers

In OT environments, how a service stops matters as much as how it starts. An MQTT bridge that’s killed mid-publish can corrupt the message buffer. A historian that’s terminated without flushing can lose the last 30 seconds of data.

Quadlet exposes two distinct shutdown timeouts that operate at different layers of the stack:

[Container]

StopTimeout=45

[Service]

TimeoutStopSec=60s

KillMode=mixed

FinalKillSignal=SIGKILL

Why two timeouts? Podman applies its own internal shutdown sequence when stopping a container. By default, Podman sends SIGTERM and waits 10 seconds before escalating to SIGKILL — regardless of what systemd has configured. The StopTimeout= key in the [Container] section controls this Podman-internal deadline. If your application needs 45 seconds to flush a historian buffer, but you only set TimeoutStopSec=60s without StopTimeout=, Podman will SIGKILL the container after 10 seconds — well before the systemd timeout expires.

The two timeouts must be coordinated:

StopTimeout=45 (in [Container]): Tells Podman to wait 45 seconds after SIGTERM before sending SIGKILL to the container process. This is the window your application has for graceful shutdown: flushing buffers, closing database connections, publishing last-will messages.

TimeoutStopSec=60s (in [Service]): Tells systemd to wait 60 seconds for the entire podman stop command to complete before forcibly killing the Podman process itself. This should be set longer than StopTimeout= to give Podman time to complete its internal shutdown sequence plus cleanup.

KillMode=mixed sends SIGTERM to the main process only; if the stop timeout expires, SIGKILL goes to every process still left in the unit’s control group. This ensures that the main application gets its graceful shutdown window while lingering child processes don’t prevent the service from restarting.

⚠️ Operational note: A common misconfiguration is setting TimeoutStopSec= without StopTimeout=. In this case, Podman’s default 10-second internal timeout silently overrides the systemd grace period, and the container is killed long before the operator expects. Always set both, with StopTimeout < TimeoutStopSec, to ensure the application receives the intended shutdown window.

Compose supports stop_grace_period, but it is enforced by the container engine, not by PID 1. If the engine’s daemon is under memory pressure or is itself being restarted, the grace period may not be honored. Compose also exposes only this one timeout, so it has no way to express the two-layer coordination described above.

Industrial scenario: A historian buffer holds 45 seconds of unwritten sensor data. The device receives a firmware update that requires a service restart. With StopTimeout=45 and TimeoutStopSec=60s, the historian process gets its full flush window inside the container, and Podman gets 15 additional seconds for cleanup. KillMode=mixed ensures child processes (log rotators, health reporters) that don’t exit cleanly are forcefully reaped so the restart proceeds. With Compose, the single stop_grace_period is a best-effort request to a daemon that may itself be under pressure, and there’s no visibility into the container runtime’s internal timeout.

Hard Lifecycle Coupling: BindsTo=

systemd’s BindsTo= directive creates a hard lifecycle coupling between services. If service A BindsTo= service B, and service B stops or fails, service A is immediately stopped.

[Unit]

Description=MQTT Protocol Bridge

BindsTo=hardware-watchdog.service

After=hardware-watchdog.service

[Container]

Image=oci.example.com/margo/mqtt-bridge:2.1.0

Compose’s depends_on ensures startup ordering, but it does not enforce runtime coupling. If a dependency fails, the services that depend on it keep running. In OT environments, this can be dangerous: if the hardware watchdog service dies, the protocol bridge should be stopped immediately, because the device can no longer recover from faults on its own.

BindsTo= also works with non-container units. A Quadlet container can be bound to the device unit of a physical network interface (sys-subsystem-net-devices-eth0.device), a mount point, or a hardware watchdog timer. Compose’s dependency model is limited to services within the Compose file; it cannot express dependencies on system-level resources.

Industrial scenario: A protocol gateway container is bound to both the hardware watchdog and the physical Ethernet interface connecting to the PLC network. If the watchdog daemon crashes, the gateway stops immediately, preventing a state where the bridge runs without self-recovery capability. If the Ethernet interface disappears from the system (an unplugged USB network adapter, for example), the gateway stops rather than queuing messages into a buffer that will overflow. Note that a .device unit tracks whether the interface exists, not whether its link is up, so reacting to a pulled cable or a failed switch needs an additional link monitor. Compose cannot express either dependency because both targets exist outside the container world.
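The double binding in this scenario could be written roughly as follows. This is a minimal sketch: the file name and image reference are hypothetical, and eth0 is assumed to be the PLC-facing interface.

```ini
# protocol-gateway.container (hypothetical file name)
[Unit]
Description=Protocol Gateway
# Stop this unit as soon as either the watchdog service or the
# PLC-facing interface's device unit goes away
BindsTo=hardware-watchdog.service sys-subsystem-net-devices-eth0.device
# Ordering is declared separately; BindsTo= alone does not imply it
After=hardware-watchdog.service sys-subsystem-net-devices-eth0.device

[Container]
# Hypothetical image reference
Image=oci.example.com/margo/protocol-gateway:1.0.0
```

Listing both units on one BindsTo= line keeps the coupling visible in a single place; each of them independently stops the gateway when it becomes inactive.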

Conditional Execution: Run Only When Hardware Is Present

[Unit]

ConditionPathExists=/dev/ttyUSB0

ConditionMemory=>=512M

AssertPathExists=/sys/class/gpio

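systemd conditions prevent a service from starting if prerequisites aren’t met, without marking it as failed. ConditionPathExists=/dev/ttyUSB0 means the serial adapter container won’t start if the USB device isn’t plugged in. It won’t error. It won’t consume resources trying and retrying. It simply doesn’t start. Assert directives such as AssertPathExists= are the stricter variant: when the check fails, the start job fails and the failure is logged, which suits prerequisites that must never be missing.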

This is essential for industrial hardware where devices have optional expansion modules. The same Quadlet archive can be deployed to all gateways in a fleet, but the service only activates on devices where the relevant hardware is present. The Compose specification has no conditional execution mechanism — every defined service is expected to start.

Industrial scenario: A fleet of 500 identical gateways serves a factory. 200 of them have a serial expansion module for legacy Modbus RTU devices. The Margo fleet manager deploys the same Quadlet archive to all 500. On the 200 devices with /dev/ttyUSB0, the serial adapter starts. On the other 300, it doesn’t — silently, cleanly, without errors or restart loops. With Compose, you’d need either two different Compose files (doubling fleet management complexity) or a wrapper script that checks for hardware before starting the service (moving logic outside the specification).
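A serial adapter unit for such a fleet might look roughly like this. The file name and image reference are hypothetical; AddDevice= is the Quadlet key for passing a host device into the container.

```ini
# serial-adapter.container (hypothetical file name)
[Unit]
Description=Modbus RTU Serial Adapter
# Skip silently, without entering a failed state, when the
# expansion module is absent
ConditionPathExists=/dev/ttyUSB0

[Container]
# Hypothetical image reference
Image=oci.example.com/margo/serial-adapter:1.0.0
# Pass the serial device through to the container
AddDevice=/dev/ttyUSB0

[Install]
WantedBy=multi-user.target
```

Conditions are evaluated when the unit is started, so a module plugged in after boot would not start the adapter by itself; that case would need an extra trigger such as a udev rule, which is outside this sketch.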

Failure Actions: OnFailure= and OnSuccess=

[Unit]

OnFailure=send-alert@%n.service

OnSuccess=log-completion@%n.service

When a Quadlet service fails, systemd can automatically trigger another service — an alert sender, a diagnostic collector, a fallback mode activator. This is event-driven failure handling built into the init system. Compose has restart policies (restart: always, restart: on-failure), but no mechanism to trigger cross-service actions on failure.

Industrial scenario: A vision quality-inspection container fails. OnFailure= triggers safety-fallback.service, which activates a hardware relay to divert the production line to manual inspection. The fallback is not a container — it’s a native script that toggles a GPIO pin. Compose cannot express this because the fallback action exists outside the container world.
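The two units in this scenario might be sketched as below. The file names, image reference, and script path are all hypothetical; the script stands in for whatever toggles the relay on a given device.

```ini
# vision-qc.container (hypothetical file name)
[Unit]
Description=Vision Quality Inspection
# Start the fallback unit when this service enters the failed state
OnFailure=safety-fallback.service

[Container]
# Hypothetical image reference
Image=oci.example.com/margo/vision-qc:1.0.0

# safety-fallback.service (separate file; a native unit, not a container)
[Unit]
Description=Divert production line to manual inspection

[Service]
Type=oneshot
# Hypothetical script that toggles the relay's GPIO pin
ExecStart=/usr/local/bin/divert-to-manual.sh
```

Keeping the fallback as a plain oneshot service means it has no dependency on the container runtime, so it can still run when the failure lies in Podman itself.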

This pattern — container fails → non-container action executes — is the intersection of IT and OT that industrial edge computing lives in. Compose’s world ends at the container boundary. systemd’s world encompasses the entire machine, including the hardware it’s wired to.

Lifecycle Capabilities Compose Cannot Express — Summary

Readiness: Compose polls with healthchecks; Quadlet uses push-based Type=notify with sd_notify.

Liveness: Compose relies on the same periodic healthcheck; Quadlet adds a WatchdogSec= deadline enforced by PID 1.

Shutdown: Compose has a single stop_grace_period; Quadlet coordinates StopTimeout= and TimeoutStopSec=.

Runtime coupling: Compose’s depends_on orders startup only; BindsTo= stops a unit when the unit it is bound to stops, including non-container units.

Conditional execution: Compose has no equivalent; Condition and Assert directives gate startup on hardware and system state.

Failure actions: Compose offers restart policies only; OnFailure= and OnSuccess= trigger other units, including non-container ones.

What This All Means for Margo

Across three posts, we’ve walked through the specific technical capabilities that Quadlet inherits from systemd and Compose structurally cannot provide:

Resource governance (Part 1): Soft memory throttles that absorb bursts without killing processes. CPU core pinning for latency-sensitive protocol bridging. I/O bandwidth limits that protect flash storage lifespan. Hierarchical slice budgets that cap aggregate workload consumption and protect system functions by architecture.

Cybersecurity (Part 2): Seven layers of defense-in-depth — filesystem isolation, kernel surface reduction, network microsegmentation, syscall filtering — all declared in the same file, all scored by systemd-analyze security, all mapped to IEC 62443 foundational requirements. With a documented pattern for maintaining full hardening under rootless operation.

Production lifecycle (Part 3): Push-based readiness, systemd watchdog supervision, two-layer shutdown timeouts that coordinate Podman and systemd, hard coupling to hardware services, conditional execution for heterogeneous fleets, and event-driven failure actions that can trigger safety relays through GPIO pins.

The features aren’t theoretical. They’re available today in Podman 5.x on every major enterprise Linux distribution. They require no additional software, no daemon, and no changes to OCI images.

Adding quadlet.v1 as a Margo deployment profile doesn’t just extend device coverage (as described in the companion proposal). It extends the security, resource governance, and lifecycle vocabulary available to Margo-compliant workloads. Application vendors can ship hardened Quadlet files alongside their Compose archives, and device vendors can enforce fleet-wide resource budgets through systemd slices — something the Compose specification cannot express at any abstraction level.

The industrial edge needs more than container deployment. It needs container governance. Quadlet provides the levers. The question for the Margo community is whether we want to offer those levers through the specification, or leave each device vendor to reinvent them outside it.

This series accompanies a Margo specification enhancement proposal for quadlet.v1 as a deployment profile. Discussion and feedback welcome on discourse.margo.org and the Margo specification GitHub.